Erika Andersen, Forbes

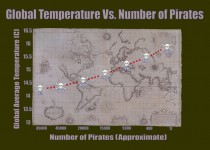

It’s true. This extremely scientific graph proves it:

You can see that as the number of pirates in the world has decreased over the past 130 years, global warming has gotten steadily worse. In fact, this makes it entirely clear that if you truly want to stop global warming, the most impactful thing to do is - become a pirate.

Hope you’re laughing. My husband told me this wonderful premise a few months ago, and I couldn’t resist sharing it with you, for a very specific reason. I’m fascinated by why it’s so funny. I believe it’s because it’s an only slightly more extreme version of the fake logic we hear every day - the conclusions that pass for critical thinking in these days of completely unleashed 24-7 communication. For example:

Someone who has cancer drinks gallons of lemon water and their cancer goes into remission: they create a website to talk about how lemon water cures cancer.

A business is doing badly and they move to a new building and things start to pick up: the CEO writes a book about how changing your environment is the key to success.

Statistics show that people who leave their jobs after less than a year are more likely to smoke: someone starts a campaign to reduce smoking by encouraging people to stay at their jobs longer.

My older sister, a very wise and smart woman who is a political scientist at Syracuse University, teaches a statistics class to freshmen, where she endeavors to teach them critical thinking. She talks about this as being the most common error in logic: confusing simultaneity with causality. In other words, assuming that because two things are happening at the same time, they exist in a cause and effect relationship with each other.
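To see how easily two unrelated trends can look linked, here is a minimal sketch in Python. The numbers are made up purely for illustration (they are not real pirate counts or temperatures), and the helper names `pearson` and `changes` are hypothetical. Two series that merely drift in opposite directions over the same period correlate almost perfectly, yet the link largely disappears once you compare period-to-period changes instead of levels.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

def changes(series):
    """Period-to-period changes, which strips out a shared long-run trend."""
    return [b - a for a, b in zip(series, series[1:])]

# Made-up illustration data, NOT real measurements.
pirates = [100, 92, 79, 71, 58, 52, 39, 31, 18, 12]
temps = [14.0, 14.1, 14.1, 14.3, 14.4, 14.4, 14.6, 14.7, 14.7, 14.9]

r_levels = pearson(pirates, temps)
r_changes = pearson(changes(pirates), changes(temps))
print(f"correlation of levels:  {r_levels:.2f}")
print(f"correlation of changes: {r_changes:.2f}")
```

A strong correlation of levels only says both series moved over the same stretch of time. It says nothing about whether one drives the other.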

Because anyone can say anything anywhere these days (pretty much), there’s a lot of fuzzy thinking floating around that seems more legitimate than it would have in former times because it’s in print. Now, don’t get me wrong: I’m a huge proponent of free speech. I just feel we all have to be more discriminating than ever before about what we believe. Not cynical or negative: discriminating.

So, when someone proposes a cause and effect relationship between two things - reduction in pirates causing global warming; Obama creating the global economic crisis; young people ruining American business - ask for the data that shows they’re related, rather than simply that they’re happening at the same time.

But if you’re dead set on becoming a pirate, I’m not going to stop you.

By P Gosselin

By Frank Bosse and Fritz Vahrenholt

(Translated/edited by P Gosselin)

In November our sun was once again below normal in activity. The 84th month since the current solar cycle started in December 2008 saw a solar sunspot number (SSN) of 63.2, which was 72% of the mean for month 84 of a cycle since observations began in 1755.
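As a quick arithmetic check, the two quoted figures imply what the long-term mean for month 84 must be. The short Python sketch below uses only the numbers given above. Because the 72% figure is rounded, the implied mean is approximate.

```python
observed_ssn = 63.2     # November value quoted above
share_of_mean = 0.72    # "72% of the mean", as quoted above (rounded)

# Back out the mean that the two quoted figures imply.
implied_mean = observed_ssn / share_of_mean
shortfall = implied_mean - observed_ssn

print(f"implied mean for month 84: {implied_mean:.1f}")
print(f"shortfall versus the mean: {shortfall:.1f}")
```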

Figure 1: Our current solar cycle (SC) 24 (red) compared to the mean cycle (blue) of the previous 23 cycles. The current cycle over the past year or so has been very similar to solar cycle number 5 (black), which occurred from 1798 to 1810.

What follows is a comparison of all cycles:

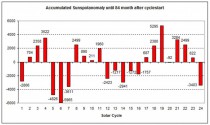

Figure 2: The accumulated monthly deviations from the mean value (blue curve in Figure 1) for each cycle.
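The quantity plotted in Figure 2 can be sketched as a running sum of monthly differences between a cycle and the mean cycle. The Python snippet below is an illustration only: the function name `accumulated_anomaly` is hypothetical and the monthly values are invented, not taken from the sunspot record.

```python
from itertools import accumulate

def accumulated_anomaly(cycle, mean_cycle):
    """Running total of the monthly (cycle - mean) differences."""
    return list(accumulate(c - m for c, m in zip(cycle, mean_cycle)))

# Hypothetical monthly sunspot numbers, for illustration only.
mean_cycle = [10, 20, 35, 50, 60]
weak_cycle = [8, 15, 25, 40, 45]

print(accumulated_anomaly(weak_cycle, mean_cycle))
```

A cycle that runs below the mean month after month produces a curve that keeps sinking, which is why a weak cycle stands out so clearly in this kind of plot.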

The current solar cycle 24 is weak compared to the previous cycles beginning with solar cycle 18 (1945). The books are practically closed for the current cycle as it is not expected to become more active and activity is expected to trail off. We are experiencing the weakest solar cycle since the Dalton Minimum 1790-1830, which involved solar cycles 5, 6 and 7.

What’s ahead?

For estimating the sunspot activity of the upcoming cycle, observing the polar fields during times of activity minima provides strong indications. We reported on this here. So what can we expect some three years before the awaited minimum?

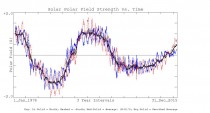

Figure 3: The polar fields of the sun since 1976. (Source: stanford.edu)

Early indications of a modestly active solar cycle 25

The south polar field in particular (shown in red in Figure 3) is beginning to show signs of strengthening a little, yet it is still behind the values of the very active cycles that occurred during the second half of the 20th century. This could be an indication that solar cycle number 25 may not be much weaker than the current cycle, but also not stronger. We will know more in about 3 years.

Craig Idso, Robert M. Carter, S. Fred Singer

The most important fact about climate science, often overlooked, is that scientists disagree about the environmental impacts of the combustion of fossil fuels on the global climate. There is no survey or study showing “consensus” on the most important scientific issues, despite frequent claims by advocates to the contrary.

Scientists disagree about the causes and consequences of climate for several reasons. Climate is an interdisciplinary subject requiring insights from many fields. Very few scholars have mastery of more than one or two of these disciplines. Fundamental uncertainties arise from insufficient observational evidence, disagreements over how to interpret data, and how to set the parameters of models. The Intergovernmental Panel on Climate Change (IPCC), created to find and disseminate research finding a human impact on global climate, is not a credible source. It is agenda-driven, a political rather than scientific body, and some allege it is corrupt. Finally, climate scientists, like all humans, can be biased. Origins of bias include careerism, grant-seeking, political views, and confirmation bias.

Probably the only “consensus” among climate scientists is that human activities can have an effect on local climate and that the sum of such local effects could hypothetically rise to the level of an observable global signal. The key questions to be answered, however, are whether the human global signal is large enough to be measured and if it is, does it represent, or is it likely to become, a dangerous change outside the range of natural variability? On these questions, an energetic scientific debate is taking place on the pages of peer-reviewed science journals.

In contradiction of the scientific method, IPCC assumes its implicit hypothesis - that dangerous global warming is resulting, or will result, from human-related greenhouse gas emissions - is correct and that its only duty is to collect evidence and make plausible arguments in the hypothesis’s favor. It simply ignores the alternative and null hypothesis, amply supported by empirical research, that currently observed changes in global climate indices and the physical environment are the result of natural variability.

The results of the global climate models (GCMs) relied on by IPCC are only as reliable as the data and theories “fed” into them. Most climate scientists agree those data are seriously deficient and IPCC’s estimate for climate sensitivity to CO2 is too high. We estimate a doubling of CO2 from pre-industrial levels (from 280 to 560 ppm) would likely produce a temperature forcing of 3.7 W/m² in the lower atmosphere, for about 1°C of prima facie warming. The recently quiet Sun and extrapolation of solar cycle patterns into the future suggest a planetary cooling may occur over the next few decades.
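The passage does not say how the 3.7 W/m² and roughly 1°C figures were obtained. One common back-of-envelope route, assumed here rather than taken from the authors, is the widely used simplified logarithmic forcing expression with a coefficient of 5.35, combined with a no-feedback response of roughly 0.3 K per W/m². Both constants are assumptions of this sketch, as is the function name `co2_forcing`.

```python
from math import log

def co2_forcing(c_ppm, c0_ppm=280.0, alpha=5.35):
    """Simplified logarithmic CO2 forcing in W/m^2 (alpha is an assumed coefficient)."""
    return alpha * log(c_ppm / c0_ppm)

# Assumption: no-feedback response of roughly 0.3 K per W/m^2.
NO_FEEDBACK_RESPONSE = 0.3

forcing = co2_forcing(560.0)                # doubling from 280 to 560 ppm
warming = NO_FEEDBACK_RESPONSE * forcing    # warming before any feedbacks

print(f"forcing for a doubling: {forcing:.2f} W/m^2")
print(f"no-feedback warming:    {warming:.2f} K")
```

This only reproduces the no-feedback starting point. How much feedbacks add to or subtract from that figure is exactly where the disagreement described above lies.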

In a similar fashion, all five of IPCC’s postulates, or assumptions, are readily refuted by real-world observations, and all five of IPCC’s claims relying on circumstantial evidence are refutable. For example, in contrast to IPCC’s alarmism, we find neither the rate nor the magnitude of the reported late twentieth century surface warming (1979-2000) lay outside normal natural variability, nor was it in any way unusual compared to earlier episodes in Earth’s climatic history. In any case, such evidence cannot be invoked to “prove” a hypothesis, but only to disprove one. IPCC has failed to refute the null hypothesis that currently observed changes in global climate indices and the physical environment are the result of natural variability.

Rather than rely exclusively on IPCC for scientific advice, policymakers should seek out advice from independent, nongovernment organizations and scientists who are free of financial and political conflicts of interest. NIPCC’s conclusion, drawn from its extensive review of the scientific evidence, is that any human global climate impact is within the background variability of the natural climate system and is not dangerous.

In the face of such facts, the most prudent climate policy is to prepare for and adapt to extreme climate events and changes regardless of their origin. Adaptive planning for future hazardous climate events and change should be tailored to provide responses to the known rates, magnitudes, and risks of natural change. Once in place, these same plans will provide an adequate response to any human-caused change that may or may not emerge.

Policymakers should resist pressure from lobby groups to silence scientists who question the authority of IPCC to claim to speak for “climate science.” The distinguished British biologist Conrad Waddington wrote in 1941 (Waddington, C.H. 1941. The Scientific Attitude. London, UK: Penguin Books),

It is… important that scientists must be ready for their pet theories to turn out to be wrong. Science as a whole certainly cannot allow its judgment about facts to be distorted by ideas of what ought to be true, or what one may hope to be true (Waddington, 1941).

This prescient statement merits careful examination by those who continue to assert the fashionable belief, in the face of strong empirical evidence to the contrary, that human CO2 emissions are going to cause dangerous global warming.

Patrick Michaels

Are political considerations superseding scientific ones at the National Oceanic and Atmospheric Administration?

When confronted with an obviously broken weather station that was reading way too hot, they replaced the faulty sensors but refused to adjust the bad readings it had already taken. And when dealing with “the pause” in global surface temperatures that is in its 19th year, the agency threw away satellite-sensed sea-surface temperatures, substituting questionable data that showed no pause.

The latest kerfuffle is local, not global, but happens to involve probably the most politically important weather station in the nation, the one at Washington’s Reagan National Airport.

I’ll take credit for this one. I casually noticed that the monthly average temperatures at National were departing from their 1981-2010 averages by a couple of degrees more, in the warm direction, than those at Dulles.

Temperatures at National are almost always higher than those at Dulles, 19 miles away. That’s because of the well-known urban warming effect, as well as an elevation difference of 300 feet. But the weather systems that determine monthly average temperature are, in general, far too large for there to be any significant difference in the departure from average at two stations as close together as Reagan and Dulles. Monthly data from recent decades bear this out until, all at once, in January 2014 and every month thereafter, the departure from average at National was greater than that at Dulles.

The average monthly difference for January 2014 through July 2015 is 2.1 degrees Fahrenheit, which is huge when talking about things like record temperatures. For example, National’s all-time record last May was only 0.2 degrees above the previous record.
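The comparison behind that figure can be sketched in a few lines of Python: take each station's monthly departure from its own normal, subtract one from the other, and average the gaps. The values below are hypothetical placeholders chosen for illustration, not the actual National and Dulles records, and `mean_departure_gap` is a made-up helper name.

```python
from statistics import mean

def mean_departure_gap(dep_a, dep_b):
    """Average of (station A departure - station B departure), month by month."""
    return mean(a - b for a, b in zip(dep_a, dep_b))

# Hypothetical monthly departures from the 1981-2010 normal, in degrees F.
national = [3.1, 1.4, -0.5, 2.8]
dulles = [1.0, -0.6, -2.7, 0.7]

gap = mean_departure_gap(national, dulles)
print(f"average gap in departures: {gap:.1f} F")
```

Working with departures rather than raw temperatures matters here. It cancels out the constant offset from elevation and urban warming, so a gap near zero is what two nearby stations should normally show.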

Earlier this month, I sent my findings to Jason Samenow, a terrific forecaster who runs the Washington Post’s weather blog, Capital Weather Gang. He and his crew verified what I found and wrote up their version, giving due credit and adding other evidence that something was very wrong at National. And, in remarkably quick action for a government agency, the National Weather Service swapped out the sensor within a week and found that the old one was reading 1.7 degrees too high. Close enough to 2.1, the observed difference.

But the National Weather Service told the Capital Weather Gang that there will be no corrections, despite the fact that the disparity suddenly began 19 months ago and varied little once it began. It said correcting for the error wouldn’t be “scientifically defensible.” Therefore, people can and will cite the May record as evidence for dreaded global warming with impunity. Only a few weather nerds will know the truth. Over a third of this year’s 37 90-degree-plus days, which gives us a remote chance of breaking the all-time record, should also be eliminated, putting this summer rightly back into normal territory.

It is really politically unwise not to do a simple adjustment on these obviously-too-hot data. With all of the claims that federal science is being biased in service of the president’s global-warming agenda, the agency should bend over backwards to expunge erroneous record-high readings.

In July, by contrast, NOAA had no problem adjusting the global temperature history. In that case, the method they used guaranteed that a growing warming trend would substitute for “the pause.” They reported in Science that they had replaced the pause (which shows up in every analysis of satellite and weather balloon data) with a significant warming trend.

Normative science says a trend is “statistically significant” if there’s less than a 5 percent probability that it would happen by chance. NOAA claimed significance at the 10 percent level, something no graduate student could ever get away with. There were several other major problems with the paper. As Judy Curry, a noted climate scientist at Georgia Tech, wrote, “color me ‘unconvinced.’”
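To make the 5 percent versus 10 percent distinction concrete, here is a minimal Python sketch of a permutation test for a linear trend. It is not the method used in the paper discussed above. The series is invented, the function names are hypothetical, and the test treats the data points as exchangeable, which real temperature series (being autocorrelated) are not.

```python
import random

def ols_slope(ys):
    """Least-squares slope of ys against time steps 0, 1, 2, ..."""
    n = len(ys)
    mx, my = (n - 1) / 2, sum(ys) / n
    num = sum((x - mx) * (y - my) for x, y in enumerate(ys))
    den = sum((x - mx) ** 2 for x in range(n))
    return num / den

def trend_p_value(ys, shuffles=2000, seed=0):
    """Two-sided permutation p-value for the slope (assumes exchangeable points)."""
    rng = random.Random(seed)
    observed = abs(ols_slope(ys))
    shuffled = list(ys)
    hits = 0
    for _ in range(shuffles):
        rng.shuffle(shuffled)
        if abs(ols_slope(shuffled)) >= observed - 1e-12:
            hits += 1
    return (hits + 1) / (shuffles + 1)

def is_significant(p, level=0.05):
    """True when the chance probability p falls below the chosen level."""
    return p < level

# Hypothetical anomaly series, for illustration only.
series = [0.00, 0.05, 0.02, 0.09, 0.11, 0.08, 0.15, 0.18, 0.16, 0.22, 0.25, 0.24]
p = trend_p_value(series)
print(p, is_significant(p, 0.05), is_significant(p, 0.10))
```

A result with, say, p = 0.07 would pass at the 10 percent level and fail at the conventional 5 percent level, which is the gap the paragraph above is complaining about.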

Unfortunately, following this with the kerfuffle over the Reagan temperature records is only going to “convince” even more people that our government is blowing hot air on global warming.

Patrick Michaels is director of the Center for the Study of Science at the Cato Institute.

Steve Goddard, Real Science

Update: See this excellent summary by Francis Menton on The Greatest Scientific Fraud Of All Time—Part VI

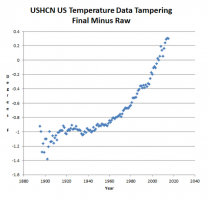

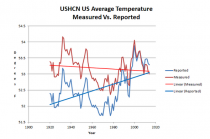

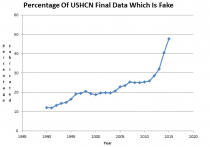

The measured US temperature data from USHCN shows that the US is on a long-term cooling trend. But the reported temperatures from NOAA show a strong warming trend.

Measured : ushcn.tavg.latest.raw.tar.gz

Reported : ushcn.tavg.latest.FLs.52j.tar.gz

They accomplish this through a spectacular hockey stick of data tampering, which corrupts the US temperature trend by almost two degrees.

The biggest component of this fraud is making up data. Almost half of all reported US temperature data is now fake. They fill in missing rural data with urban data to create the appearance of non-existent US warming.

The depth of this fraud is breathtaking, but completely consistent with the fraudulent profession which has become known as “climate science”.

---------

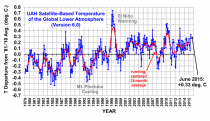

For years, climate scientists have been debating the “hiatus” in global warming, pushing dozens of explanations for why global temperatures had not risen significantly in the last decade or so in the surface record and for the last two decades in the satellite record. But the debate was cut short in June when the National Oceanic and Atmospheric Administration published a study claiming the “hiatus” never existed.

“Newly corrected and updated global surface temperature data from NOAA’s [National Centers for Environmental Information] do not support the notion of a global warming ‘hiatus,’” wrote NOAA scientists in their study.

The study was highly criticized for inflating the temperature record since the late 1990s to show vastly more global warming than was shown in older data. The warming “hiatus” was eliminated and the warming trend over the period was more than doubled.

“There’s been so much criticism of NOAA’s alteration of the sea surface temperature that we are really just going to have to use the University of East Anglia data,” Pat Michaels, a climate scientist with the libertarian Cato Institute, told The Daily Caller News Foundation.

“I don’t think that’s going to stand the test of time,” Michaels said of NOAA’s recent adjustments.

But what Michaels and others say is more problematic is the growing divergence between NOAA’s new temperature data and both satellite data and records from the UK Met Office. NOAA’s data shows significantly more warming than Met Office or satellite records.

“It’s a major problem because outside of the north polar region, the upper troposphere is supposed to warm faster than the surface,” Michaels said.

“Pretty much every projection made by our climate models for sensible weather is simply not at all trustworthy,” Michaels said.

Anthony Sadar

The U.S. Environmental Protection Agency is apparently operating under the control of President Obama’s leftist ideology. There is little doubt about this as the president’s hand-picked Environmental Protection Agency administrator, Gina McCarthy, has basically told professional audiences that she is doing the bidding of her boss. What may surprise folks is that this is not inappropriate with respect to how the EPA was initially established by President Richard Nixon. Relative to the advancement of the country’s economic, environmental and public health, and the well-being of objective scientific practice itself, an ideology-driven EPA is quite inappropriate.

In his new book “Environmentalism Gone Mad: How a Sierra Club Activist and Senior EPA Analyst Discovered a Radical Green Energy Fantasy,” Alan Carlin explains that the EPA “reports directly to the president and thus has no independence from the executive branch like some regulatory agencies. This means that if an administration wants to use its power to determine regulations, it can impose exactly what it wishes to do subject only to the Congressional Review Act and Congress’ powers of appropriations, both of which have proved ineffective so far in preventing Obama from doing what he wants with regard to EPA.”

Mr. Carlin was at the EPA almost from its inception in 1970. He came from research work at the RAND Corp. in Santa Monica, Ca., to work with the EPA in Washington, D.C. from 1971 to 2010. In early 2009, after submitting serious negative comments on the EPA’s draft technical support document for the endangerment finding on the adverse effects of increasing levels of atmospheric greenhouse gases, Mr. Carlin had been maligned by the EPA powers-that-be for challenging the Obama administration’s poor economics and science represented in these findings. Yet, as an EPA senior analyst with an undergraduate degree in physics from Caltech bolstered by a doctorate in economics from MIT, Mr. Carlin surely knows his stuff.

He asserts that even if EPA’s current efforts to control carbon-dioxide emissions are successful, “it will not change the climate or extreme weather in any measurable way even though Obama has proclaimed it will. It will simply increase the rates paid for less reliable energy, with lower-income Americans bearing most of the burden along with the slow recovery of the U.S. economy.”

Throughout his lengthy personal recounting in “Environmentalism Gone Mad” of the rise and fall of EPA adherence to science over politics, Mr. Carlin engages the reader with essential details. These include not only an insider’s perspective on the operation of the EPA but also numerous, specific and sensible short-term and long-term recommendations on how to “get out of this mess” - a mess largely brought about by the current administration’s adherence to radical leftist environmentalism. The need to consider reasonable costs versus benefits in air quality rules, as exemplified in the recent Supreme Court decision in Michigan v. Environmental Protection Agency, is a move encouraged by Mr. Carlin.

Good economics and science require a broad perspective, yet when politics and financial control dominate the mix of viewpoints, the climate changes, and usually in an ominous way. Mr. Carlin expresses it in one of his long-term reform recommendations to reduce incentives for EPA managers to follow the administration: “Besides the normal bureaucratic controls, the pay of all EPA executives and senior analysts [is] directly determined by Congress and the president. This is unlikely to lead to independent action or thought by these crucial civil service employees. Yet independent analysis is desperately needed if EPA is to reflect good science and economics rather than science determined by their political masters.”

Without a doubt, “Environmentalism Gone Mad” is an important book that provides well-informed personal insight into the convoluted world of calamitous climate science promoted by what Mr. Carlin calls the “climate-industrial complex” or “CIC.” The CIC includes the science elites, mainstream media, environmental groups, leftist politicians and bureaucratic administrators, “green” energy and fuel producers and promoters, PR myth-makers (like those labeling knowledgeable skeptics as “deniers”), and others who profit financially, professionally and personally from foisting a future climate fantasy on an unwary public.

Mr. Carlin observes: “If governments simply stayed out of energy decisions not involving government-owned resources, urgent national security objectives, or actual proven pollution problems and let the markets decide how to meet energy needs, everyone except the CIC would be much better off, including the environment.”

Ratepayers and all taxpayers would do well to educate themselves on the inefficient, sometimes unscrupulous, and perhaps often counterproductive actions of those obstructing the goal of good, clean and affordable domestic energy. “Environmentalism Gone Mad” is a good first step in this essential education.

Anthony J. Sadar, a certified consulting meteorologist, is the author of “In Global Warming We Trust: A Heretic’s Guide to Climate Science” (Telescope Books, 2012).

James Spann

Update: As Professor Judith Curry adds (note it applies to the media as well as the warmists in the government or universities):

----------

I have been a professional meteorologist for 36 years. Since my debut on television in 1979, I have been an eyewitness to the many changes in technology, society, and how we communicate. I am one who embraces change, and celebrates the higher quality of life we enjoy now thanks to this progress.

But, at the same time, I realize the instant communication platforms we enjoy now do have some negatives that are troubling. Just a few examples in recent days…

I would say hundreds of people have sent this image to me over the past 24 hours via social media.

Comments are attached… like “This is a cloud never seen before in the U.S.”… “can’t you see this is due to government manipulation of the weather from chemtrails”… “no doubt this is a sign of the end of the age”.

Let’s get real. This is a lenticular cloud. They have always been around, and quite frankly aren’t that unusual (although it is an anomaly to see one away from a mountain range). The one thing that is different today is that almost everyone has a camera phone, and almost everyone shares pictures of weather events. You didn’t see these often in earlier decades because technology didn’t allow it. Lenticular clouds are nothing new. But, yes, they are cool to see.

No doubt national news media outlets are out of control when it comes to weather coverage, and their idiotic claims find their way to us on a daily basis.

The Houston flooding is a great example. We are being told this is “unprecedented”...Houston is “under water”...and it is due to manmade global warming.

Yes, the flooding in Houston yesterday was severe, and a serious threat to life and property. A genuine weather disaster that has brought on suffering.

But, no, this was not “unprecedented”. Flooding from Tropical Storm Allison in 2001 was more widespread, and flood waters were deeper. There is no comparison. In fact, many circulated this image in recent days, claiming it is “Houston underwater” from the flooding of May 25-26, 2015. The truth is that this image was captured in June 2001 during flooding from Allison.

Flood events in 2009, 2006, 1998, 1994, 1989, 1983, and 1979 brought higher water levels to most of Houston, and there were many very serious flood events before the 1970s.

On the other issue, the entire climate change situation has become politicized, which I hate. Those on the right and those on the left hang out in “echo chambers”, listening to those with similar world views and refusing to believe anything else could be true.

Everyone knows the climate is changing; it always has, and always will. I do not know of a single “climate denier”. I am still waiting to meet one.

The debate involves the anthropogenic impact, and this is not why I am writing this piece. Let’s just say the Houston flood this week is weather, and not climate, and leave it at that.

I do encourage you to listen to the opposing point of view in the climate debate, but be sure the person you hear admits they can be wrong, and has no financial interest in the issue. Unfortunately, those kinds of qualified people are very hard to find these days. It is also hard to find people who discuss climate without using the words “neocon” and “libtard”. I honestly can’t stand politics; it is tearing this nation apart.

Back to my point...many professional meteorologists feel like we are fighting a losing battle when it comes to national media and social media hype and disinformation. They will be sure to let you know that weather events they are reporting on are “unprecedented”, there are “millions and millions in the path”, it is caused by a “monster storm”, and “the worst is yet to come” since these events are becoming more “frequent”.

You will never hear about the low tornado count in recent years, the lack of major hurricane landfalls on U.S. coasts over the past 10 years, or the low number of wildfires this year. It doesn’t fit their story. But, never let facts get in the way of a good story… there will ALWAYS be a heat wave, flood, wildfire, tornado, typhoon, cold wave, and snow storm somewhere. And, trust me, they will find them, and it will probably lead their newscasts. But, users beware…

Dr. Larry Bell

Major threats to America’s security are heating up all over the world, but not due to global warming. Nevertheless, we wouldn’t know that according to statements and actions of top Obama administration leaders.

Speaking to Coast Guard Academy graduates on May 20, the president said: “The threat of changing climate cuts to the very core of your service,” adding that “climate change constitutes a serious threat to global security and immediate risk to our national security.”

This echoes the view of Secretary of State John Kerry who has described climate change as “the biggest challenge we face right now.” To support this claim, both he and Obama have cited unprecedented storms, unprecedented hurricanes, unprecedented droughts, unprecedented fires ... everything, it seems, but an unprecedented lack of simple fact checking.

A reality check would also reveal another inconvenient truth. Despite rising atmospheric CO2 concentrations, that rocketing off-the-chart warming predicted by theoretical climate models has flared out. Although everyone I know recognizes that climate really does change, it just hasn’t done so lately. Satellite recordings show that global temperatures have actually been flat over the past 18 years and counting.

So what information earned climate change the distinction of constituting an epic security threat warranting military preparedness?

That feverishly overheated policy priority originated with a warning by the U.N.’s Intergovernmental Panel on Climate Change (IPCC) that global warming would melt the massive Himalayan ice mass. This would flood rivers vital to agriculture which would later dry up as glaciers retreated.

The climatological calamity would cause mass migrations of millions of people from lowland Bangladesh across national borders, with militaries (including ours) becoming involved.

As IPCC finally admitted, that scenario was entirely fabricated without a shred of supporting science by a fellow who worked for its director. Nevertheless, in 2007 Senate Armed Services Committee members Hillary Clinton and Republican John Warner snuck some of that message into an amendment of the National Defense Authorization Act, which got our military into the climate protection business, whether they wanted to be or not.

Despite no credible evidence that a climate crisis, much less, any human-caused one, actually exists, a 2010 Department of Defense Quadrennial Defense Review (QDR) has ordained that climate change will play a “significant role in shaping the future security environment.” Accordingly, considerations of threats posed by climate change are now mandated to be incorporated into DOD’s long-range strategic plans.

Former Chief of Naval Operations Adm. Thomas Hayward strongly laments that development. He told me in a 2012 Forbes.com interview: “Despite the large number of scientific organizations within DOD and the military services, none has challenged IPCC’s flawed science of climate change, when in fact, available climate science literature is replete with peer reviewed research contradicting their assertion that man is primarily responsible.”

Hayward further pointed out that this woe begotten folly is adding unwarranted costs to defense budgets and operations at a time when important programs that really do benefit America’s security interests are being sacrificed as austerity measures.

For example, as we reduce second-strike sea and air deterrence capabilities in an Obama dream world without nuclear weapons, Russia, China, and rogue nations such as North Korea and Iran continue to expand first-strike arsenals with determination and impunity.

By 2018, the Navy will reduce the number of deployed and non-deployed submarine-launched ballistic nuclear missiles from 336 to 280. In fact, some of the missile tubes aboard the Navy’s 14 Ohio-class ballistic submarines will be purposefully altered to prevent ballistic missile launches.

The Air Force is trimming its bomber fleet from 93 to 60, including the 19 B-2 stealth bombers. In addition, fifty of our 450 Minuteman III missiles will be removed from silos and stored. The remaining 400 will constitute the lowest number since 1962 when America had 203. That total was rapidly expanded following the Cuban missile crisis.

In 2009, the White House reneged on promises to the Czech Republic to establish a missile defense site in Poland to appease Russia’s vehement opposition. Incidentally, that cancelled Polish missile defense installation would also have afforded some protection for America against Iranian and North Korean nuclear EMP strikes launched over the South Pole . . . true threats discussed in my two previous columns.

And just how do those world and national security threats posed by long-term global warming compare with still another somewhat more immediate risk . . . the accelerating spread of an ISIS caliphate beyond Syria and Iraq, with affiliates in Algeria, Egypt, and Libya?

Whereas the president has branded “climate change deniers” as dangerous threats to our future, we will be safer with more of his attention directed to some other deniers - those who cut off heads and burn people alive for not believing as they do.

Larry Bell is an endowed professor of space architecture at the University of Houston where he founded the Sasakawa International Center for Space Architecture (SICSA) and the graduate program in space architecture. He is the author of “Scared Witless: Prophets and Profits of Climate Doom” (2015) and “Climate of Corruption: Politics and Power Behind the Global Warming Hoax” (2012).

These are excellent books and make wonderful gifts. I enjoyed them. I have also been impressed by many of the books in the Icecap Amazon Book Store. Two others you might find enlightening are Confessions of a Greenpeace Dropout by Dr. Patrick Moore and Environmentalism Gone Mad by former EPA climate scientist/economist Alan Carlin. I am reading the latter now. I am very impressed. See a review by Alan Caruba here. These people ooze credibility because of where they have been: on the inside, seeing how the green movement turned watermelon, green on the outside but red on the inside.