Dec 22, 2024

Cautious Optimism On The Demise Of The Green Energy Fantasy

https://www.manhattancontrarian.com/blog/2024-12-21-cautious-optimism-on-the-demise-of-the-green-energy-fantasy

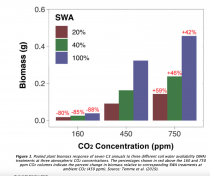

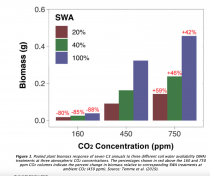

Icecap Note: There are numerous sites on the web that cover the insanity of the climate issue and the forced demise of reliable fossil fuel and nuclear energy. The Manhattan Contrarian is a site you should follow; be sure to look through its long library of very informative posts. Another site, with reviews of many papers that present the many benefits of CO2, is CO2 Science here.

It has been obvious now for many years to the numerate that the fantasy future powered by wind and sun is not going to happen. Sooner or later, reality will inevitably intrude. And yet, the fantasy has gone on for far longer than I ever would have thought possible. Hundreds of billions of dollars of government largesse have been a big part of the reason, going not just to green energy developers but also to academic charlatans and environmental NGOs to fan the flames of climate alarm.

It was three years ago, in December 2021, that I asked the question, “Which Country Or U.S. State Will Be The First To Hit The Green Energy Wall?” The “green energy wall” would occur when the addition of wind and solar generators to the grid could no longer continue, due to regular blackouts, soaring costs, or both. Candidates for first to hit the wall considered in that post included California, New York, Germany and the UK. I wrote then:

All these places, despite their wealth and seeming sophistication, are embarking on their ambitious plans without ever having conducted any kind of detailed engineering study of how their new proposed energy systems will work or how much they will cost… As these jurisdictions ramp up their wind and solar generation, and gradually eliminate the coal and natural gas, sooner or later one or another of them is highly likely to hit a “wall” - that is, a situation where the electricity system stops functioning, or the price goes through the roof, or both, forcing a drastic alteration or even abandonment of the whole scheme.

Three years on, it looks like Germany is winning the race to the wall. After a couple of decades of “Energiewende,” Germany has closed all of its nuclear plants and much of its fossil fuel capacity, with a huge build-out of wind and solar generation. How’s that going? The German site NoTricksZone posts today an English translation of a piece yesterday by Fritz Vahrenholt at the site Klimanachrichten (Climate News). The translated headline is “Two brief periods of wind doldrums and Germany’s power supply reaches its limits.” Excerpt:

From November 2 to November 8 and from December 10 to December 13, Germany’s electricity supply from renewable energies collapsed as a typical winter weather situation with a lull in the wind and minimal solar irradiation led to supply shortages, high electricity imports and skyrocketing electricity prices. At times, over 20,000 MW, more than a quarter of Germany’s electricity requirements, had to be imported. Electricity prices rose tenfold (93.6 ct/kWh).

They avoided blackouts this time (barely) by importing more than a quarter of their electricity during the times of wind/sun drought. But the sudden demands for huge imports caused the spot price of electricity in the markets to soar, affecting not only Germany but also the neighbors who supplied the power. Vahrenholt provides this map indicating the prices reached during the December wind/sun drought:

€936.28/MWh is about €0.94 per kWh, almost $1. And that’s a wholesale price; retail would be at least double. By contrast, average U.S. electricity prices are well under $0.20/kWh.
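The unit conversion behind that figure is simple to check. Below is a minimal Python sketch, assuming the 936.28 figure is in euros per megawatt-hour (the excerpt's 93.6 ct/kWh is in euro cents). Converting to dollars would need an exchange rate, which is not given here, so the sketch stops at euros.

```python
# Convert a wholesale price in euros per megawatt-hour (EUR/MWh) into
# euros and euro cents per kilowatt-hour. Assumption: the 936.28 figure
# is EUR/MWh. No currency conversion to dollars is attempted.

KWH_PER_MWH = 1_000   # 1 MWh = 1,000 kWh
CENTS_PER_EURO = 100


def eur_per_mwh_to_eur_per_kwh(price_eur_per_mwh: float) -> float:
    """Return the same price expressed in euros per kWh."""
    return price_eur_per_mwh / KWH_PER_MWH


def eur_per_mwh_to_cents_per_kwh(price_eur_per_mwh: float) -> float:
    """Return the same price expressed in euro cents per kWh."""
    return eur_per_mwh_to_eur_per_kwh(price_eur_per_mwh) * CENTS_PER_EURO


if __name__ == "__main__":
    peak = 936.28  # EUR/MWh, the December peak price cited above
    print(f"{eur_per_mwh_to_eur_per_kwh(peak):.4f} EUR/kWh")
    print(f"{eur_per_mwh_to_cents_per_kwh(peak):.1f} ct/kWh")
```

Dividing by 1,000 gives about €0.936 per kWh, or 93.6 ct/kWh, matching the figure in the Vahrenholt excerpt.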

Vahrenholt reasonably attributes the huge price spikes to elimination of reliable nuclear and fossil fuel plants, leaving Germany subject to the vagaries of the wind and sun:

The reason [for the price spikes]: The socialist/green led coalition government and the prior Merkel governments had decommissioned 19 nuclear power plants (30% of Germany’s electricity demand) and 15 coal-fired power plants were taken off the grid on April 1, 2023 alone.

From Wolfgang Große Entrup, Managing Director of the German Chemical Industry Association:

“It’s desperate. Our companies and our country cannot afford fair-weather production. We urgently need power plants that can step in safely.”

It is also clear from Vahrenholt’s map how Germany’s sudden surge of demand affected the countries that supplied the imports on short notice, particularly Norway, Sweden, the Netherlands, Denmark, and Austria. Here is the reaction in Norway:

Norway’s energy minister in the center-left government, Terje Aasland, wants to cut the power cable to Denmark and renegotiate the electricity contracts with Germany. He is thus responding to the demands of the right-wing Progress Party, which has been calling for this for a long time and will probably win the next elections. According to the Progress Party, the price infection from the south must be stopped.

And the same from Sweden:

Swedish Energy Minister Ebba Busch was even clearer: “It is difficult for an industrial economy to rely on the benevolence of the weather gods for its prosperity.” And directly to Habeck’s green policy: “No political will is strong enough to override the laws of physics - not even Mr. Habeck’s.”

When the neighbors decline to continue to supply Germany with imports during its wind/sun droughts, then it will be blackouts instead of price spikes. We continue to move slowly toward that inevitability.

In other news from Germany, its auto industry is struggling (also from soaring energy prices, not to mention EV mandates), and its government has just fallen. Economic growth has ground to a halt. This is what the green energy wall looks like. Elections will be held some time in the new year.

I’m feeling cautiously optimistic that the world will wake up from the green energy bad dream before the damage turns to disaster. Our incoming U.S. administration seems to have caught on. Germany, sorry you had to be the guinea pig for this failed experiment.

See also the latest warning, New York On The March To Climate Utopia. When will they ever learn?

See on the CO2 Science website the benefits of CO2 and the dangers of forced reduction here.

Dec 13, 2024

Human Health and Welfare Effects from Increased Greenhouse Gases and Warming

John Dunn and David Legates

Claims that global warming will have net negative effects on human health are not supported by scientific evidence. Moderate warming and increased atmospheric carbon-dioxide concentrations could provide net benefits for human welfare, agriculture, and the biosphere by reducing cold-related deaths, increasing the amount of arable land, extending the length of growing seasons, and invigorating plant life.

The harmful effects of restricting access to fossil fuel energy, and the resulting increase in energy costs, would likely outweigh any potential benefits from slightly delaying any rise in temperatures. Climate change is likely to have less impact on health and welfare than policies that would deprive the poor living in emerging economies of the benefits of abundant and inexpensive energy.

Key Takeaways

A colder climate generally poses a much greater risk to human health and causes more deaths than a warmer climate.

An increase in warmer conditions would not significantly increase the range of vector-borne diseases such as malaria or Lyme disease.

Life expectancy has improved tremendously as a result of access to affordable and reliable energy.

**************

The potential for an increase in the health and welfare effects of increasing carbon-dioxide concentrations and the concomitant warming of the climate has become an increasing focus of those concerned about climate change. Some claim that climate change is responsible for an increase in virtually everything that adversely affects human life and that it may also lead to a rapid deterioration of human health and welfare. During the past three decades, a politically-driven pseudo-science has invaded research in toxicology and epidemiology through governmental funding and environmental pressure. These efforts were intended to promote government regulatory activity, including expansion of regulatory controls.

In this Special Report, claims regarding the effects of climate change, rising air temperatures, and increasing carbon-dioxide concentrations will be identified and investigated. The results will show that a slight warming of the planet may make it more habitable and hospitable, that concerns about increases in disease proliferation due to climate change are vastly overstated, and that the expansion of abundant and inexpensive energy through the development of affordable and reliable energy has produced nearly two centuries of human progress and welfare. In particular, some of the policies intended to curb anthropogenically induced climate change may restrict access to affordable and reliable energy and are thus, ironically, harmful to low-income individuals across the world.

See full report here.

Dec 12, 2024

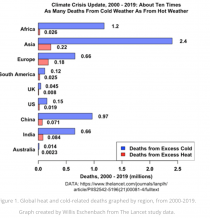

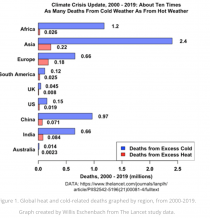

Lancet Study: Cold Kills 77 Times More Than Heat

Kenneth Richard

A new Lancet study ominously reports that from 2000 to 2019 in England and Wales there was an average of 791 heat-related excess deaths and 60,753 cold-related excess deaths each year. That’s an excess death ratio of about 77 to 1 for cold temperatures.

Expressed as deaths per 100,000 person-years, the annual rates are 1.57 heat-related vs. 122.34 cold-related excess deaths over the 2000-2019 study period.

“Our analysis indicates that the excess in mortality attributable to cold was almost two orders of magnitude higher than the excess in mortality attributable to heat.”

Several other new studies report heavily skewed ratios for cold- vs. heat-related excess deaths in the modern climate.

Excess mortality due to cold temperatures was 32 times higher than for heat in Switzerland from 1969-2017.

Schrijver et al., 2022

“Total all-cause excess mortality associated with non-optimal temperatures was 9.19%, which translates to 274,578 temperature-related excess deaths in Switzerland between 1969 and 2017. Cold-related mortality represented a larger fraction in comparison with heat, with 8.91% vs. 0.28%.”

Excess mortality due to cold temperatures was 7.6 times higher than for heat in 326 Latin American cities from 2002 to 2015.

Kephart et al., 2022

“Climate change and urbanization are rapidly increasing human exposure to extreme ambient temperatures, yet few studies have examined temperature and mortality in Latin America. We conducted a nonlinear, distributed-lag, longitudinal analysis of daily ambient temperatures and mortality among 326 Latin American cities between 2002 and 2015. We observed 15,431,532 deaths among 2.9 billion person-years of risk. The excess death fraction of total deaths was 0.67% for heat-related deaths and 5.09% for cold-related deaths.”

Excess mortality due to cold temperatures was 6.8 times higher than for heat in a city in India (Pune) from 2004 to 2012.

Ingole et al., 2022

“We applied a time series regression model to derive temperature-mortality associations based on daily mean temperature and all-cause mortality records of Pune city [India] from year January 2004 to December 2012. The analysis provides estimates of the total mortality burden attributable to ambient temperature. Overall, for deaths registered in the observational period were attributed to non-optimal temperatures, cold effect was greater 5.72% than heat”

Excess mortality due to cold temperatures was about 45 times higher than for heat in China in 2019.

Liu et al., 2022

“We estimated that 593 thousand deaths were attributable to non-optimal temperatures in China in 2019 with 580 thousand cold-related deaths and 13 thousand heat-related deaths.”

Excess mortality due to cold temperatures was 46 times higher than for heat in Mexico from 1998-2017.

Cohen and Dechezlepretre, 2022 (full paper)

“We examine the impact of temperature on mortality in Mexico using daily data over the period 1998-2017 and find that 3.8 percent of deaths in Mexico are caused by suboptimal temperature (26,000 every year). However, 92 percent of weather-related deaths are induced by cold (<12 degrees C) or mildly cold (12-20 degrees C) days and only 2 percent by outstandingly hot days (>32 degrees C). Furthermore, temperatures are twice as likely to kill people in the bottom half of the income distribution.”

Excess mortality due to cold temperatures was roughly 11 to 12 times higher than for heat “across 612 cities within 39 countries over the period 1985-2019.”

Mistry et al., 2022

“Here, we perform a comprehensive assessment of temperature-related mortality risks using ground weather stations observations and state-of-the-art reanalysis data across 612 cities within 39 countries over the period 1985-2019. ...In general, across most countries, the estimates of the excess mortality are very similar, with a global-level excess of 0.53% for heat, and 6.02% versus 6.25% for cold, from ground stations and ERA5-Land data, respectively (‘Global’ in Fig. 5 and Table S3)....”
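Each of these ratios is just the cold figure divided by the heat figure from the same study. The minimal Python sketch below recomputes them from the paired numbers quoted in the excerpts. The dictionary labels are illustrative shorthand, not the studies' own wording, and the Pune study is left out because its excerpt gives only one number. The pairs are not all like-for-like: the Mexico pair compares all cold and mildly cold days with only the hottest days (>32 degrees C).

```python
# Recompute cold-to-heat excess-mortality ratios from the paired figures
# quoted in the excerpts. Each pair is (cold, heat) in the same unit:
# annual deaths, deaths per 100,000 person-years, or percent of deaths.
# Labels are illustrative shorthand; the numbers are copied from the quotes.

QUOTED_FIGURES = {
    "England and Wales, deaths per year (2000-2019)": (60_753, 791),
    "England and Wales, per 100,000 person-years": (122.34, 1.57),
    "Switzerland, % of deaths (1969-2017)": (8.91, 0.28),
    "326 Latin American cities, % of deaths (2002-2015)": (5.09, 0.67),
    "China, thousands of deaths (2019)": (580, 13),
    "Mexico, % of weather-related deaths (1998-2017)": (92, 2),
    "612 cities, ground stations, % (1985-2019)": (6.02, 0.53),
    "612 cities, ERA5-Land, % (1985-2019)": (6.25, 0.53),
}


def cold_to_heat_ratio(cold: float, heat: float) -> float:
    """Return how many cold-attributed deaths occur per heat-attributed death."""
    if heat <= 0:
        raise ValueError("heat figure must be positive")
    return cold / heat


if __name__ == "__main__":
    for label, (cold, heat) in QUOTED_FIGURES.items():
        print(f"{label}: {cold_to_heat_ratio(cold, heat):.1f} to 1")
```

Run as written, it prints ratios of about 76.8 and 77.9 for England and Wales, 31.8 for Switzerland, 7.6 for the Latin American cities, 44.6 for China, 46.0 for Mexico, and 11.4 and 11.8 for the 612-city study.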

If there really is a concern for human health and extending life spans, there should be much more emphasis placed on reducing the costs of energy to heat homes, as well as minimizing exposure to cold temperatures.

Instead, the invariable focus is on the dangers of “climate change” or heat waves that put humanity at a tiny fraction of the risk that cold temperatures do.

Warmth saves lives. Cold kills. This has been true throughout human history, and it is no less true today.

Read more at No Tricks Zone

See also here how this is true in all regions.

Dec 04, 2024

Faddish, Ideological Energy Tries Can’t Beat Practical Tech

https://www.newsmax.com/politics/blackouts-kotek-wyoming/2024/12/04/id/1190347/

Make No Mistake, Reality Consistently Drives Reason Into Power Choices

At a time when campaigning politicians defy reality with extravagant promises, recent developments suggest reason may be returning to the electric power sector, even as the Biden administration frantically tries to spend billions on “renewable energy.”

Much of this drama plays out in the Pacific Northwest.

There, policymakers favor faddish, ideological approaches to energy needs over practical technologies relying on fossil fuels, nuclear, or hydro.

One result has been the intrusion of expensive, unreliable, and environmentally damaging wind turbines on the beauty that makes the Northwest special.

Among those saving us from ourselves are native people, for whom the land is sacred. They recently forced the federal government and Gov. Tina Kotek, D-Ore., to cancel the sale of large offshore tracts for wind development.

Also playing a role were market realities.

Only a single, inexperienced company bid on the project.

Other competitors dropped out because offshore wind is financially risky, involving high costs and the hazards of a corrosive and stormy marine environment.

Besides, who wants intermittent power that costs more than it is worth?

No one.

Another ally in the fight for sanity is Microsoft co-founder Bill Gates, sometimes known for questionable schemes, such as blocking out the sun to cool Earth.

Sweden nixed that.

Nevertheless, Gates rightly has championed nuclear power, much maligned despite obvious advantages. In 2006, he founded TerraPower to develop an advanced breeder reactor that will power a plant in Kemmerer, Wyoming.

With one billion dollars from Gates, TerraPower broke ground in June.

The plant is designed to run 50 years without refueling.

In Pennsylvania, Microsoft, which Gates continues to advise, signed a 1.6-billion-dollar agreement to power data centers with 800 megawatts of nuclear power from Three Mile Island.

With the generating capacity of thousands of large wind turbines, TMI’s Unit 1 will provide power far more reliably than wind and solar.

TMI’s reopening would have been unthinkable just a few years ago.

Many now seem to have forgotten the partial meltdown of Unit 2 in 1979.

Or, they’ve come to understand that the risks of nuclear power can be managed, just like the hazards (real or imagined) of other modern technologies.

Then there is Amazon, a company better known for its delivery of consumer goods than for its profitable data centers.

Eastern Oregon’s residents are familiar with Amazon’s large, dreary concrete buildings that have elaborate cooling equipment and large backup generators should utility power fail.

These hint at enormous power consumption.

Lured by Oregon’s generous tax breaks, Amazon and other web service providers like Google and Facebook built data centers to take advantage of the state’s cheap hydroelectric power.

However, the newcomers did not realize that public officials were inadequately planning for the increased power that new data centers would require.

This came at a time when politicians were also forcing electric utilities like Portland General Electric to switch to wind and solar while promoting an all-electric economy.

Data centers had to find reliable power and meet the ideological requirements of Oregon politicians. It did not work. The centers now need more electricity than the Oregon grid can supply.

Blackouts are a distinct possibility.

Although ideologically aligned with Oregon politicians, Amazon executives realized their very profitable data centers would fail if they kept posturing with renewable energy.

So, they took a bold step on Oct. 16, announcing that they will work with X-Energy to build small modular nuclear reactors to provide the power they need.

These will be built not in Oregon, where nuclear power is essentially banned, but across the Columbia River in Washington State, near an existing nuclear plant.

Power can be easily shipped to Oregon.

Amazon announced that it is working with Energy Northwest, a consortium of 29 Washington State utilities on this nuclear project. This suggests that many Northwest utilities are finally acknowledging that the region will need great amounts of new and reliable power.

Thank you, Amazon, for promoting a solution to the looming Pacific Northwest power shortage. This may not save us from the massive rate increases that are beginning to hit consumers due to the renewable debacle.

But it may keep the lights on.

Oct 30, 2024

Hurricane facts vs. climate fiction

By Brian Sussman

Following two back-to-back hurricanes that severely pummeled the Southeastern United States, climate activists have swooped in like vultures, blaming political conservatives for the destruction wrought by Helene and Milton. At MSNBC, Chris Hayes spouts, “We have known for decades that our planet is warming and that we would start seeing the brutal effects. But conservatives remain so deep in their denial that they are flailing around for anyone or anything else to blame.”

While many attempt to falsely connect hurricanes to anthropogenic climate change, the truth is these monster storms are a natural and necessary function of our planet’s atmosphere. But that didn’t prevent CNN from posting a piece wildly declaring, “Helene was supercharged by ultra-warm water made up to 500 times more likely by global warming.”

Hurricane season in the Atlantic Ocean traditionally begins on June 1, as the equatorial waters warm to near 80 degrees Fahrenheit, the minimum temperature required for a hurricane to form. Water temperature is often considered the fuel for a hurricane because as the warm water evaporates it subsequently condenses within the storm releasing latent heat. However, there are a multitude of other factors that must be present for hurricane formation including a storm’s distance from the equator, light winds blowing into the center of the storm, high humidity values, and something we refer to as the “saturated adiabatic lapse rate” which is basically the rate at which saturated air cools with altitude. When all of these ingredients are in perfect sync, a hurricane begins to form.

Dr. Neil Frank, longtime head of the National Hurricane Center, contends the total number of hurricanes each year ebbs and flows in sixty-year cycles. On the average, each year there are ten tropical storms (wind speeds less than 74 mph) that develop over the Atlantic Ocean, Caribbean Sea, and Gulf of Mexico. Six of these storms become hurricanes (wind speeds of 74 mph or more). In an average three-year period, roughly five hurricanes strike the United States coastline, killing approximately 50 to 100 people anywhere from Texas to Maine. Of these, two are typically major hurricanes (winds greater than 110 mph).

The cover endorsement for my recent book, Climate Cult: Exposing and Defeating Their War on Life, Liberty and Property, was written by Dr. Frank. He contends there is no evidence suggesting we are seeing more hurricanes than ever (over the past 170 years of records), and he insists the frequency and intensity of hurricanes have not changed over the years. Additionally, Dr. Frank reminds us that hurricanes are a beneficial component of the overall global atmosphere, as they act as mechanisms which draw hot air from the earth’s equatorial regions into the jet stream, which then transports the natural warmth to the colder latitudes. This allows for expansive and comfortable temperate zones, where most of us live.

But why do recent storms seem worse than ever? The answer is threefold.

First, there is no doubt property damage, in terms of dollars, is on the rise. This trend is driven by the continued development of expensive property along the coasts putting more value at risk of wind and water damage. Also, flooding has increased due to residential and commercial properties edging right up to the water’s edge. Under these modern circumstances, any given hurricane would cause more damage than it would have in the past. Sadly, the same could be said for the number of lives lost during these storms.

Second is media coverage. Back when I was presenting the weather for both CBS-TV News and KPIX-TV in San Francisco, content producers knew severe weather gains eyeballs. It is still true on TV today.

Third, there is the ad nauseam, agenda-driven propaganda put forth by activists attempting to pin their climate fiction hoax on deadly hurricanes.

But why is Florida seemingly often in the crosshairs?

Because the “Sunshine State” is a sitting duck. It’s a 500-mile-long, 160-mile-wide peninsula extending into the warm waters of the Atlantic and Gulf of Mexico, with 1,146 miles of coastline and an average elevation of a mere 100 feet. Given that the average hurricane is about 300 miles wide, the Florida peninsula is a prime target for potential disaster. As a result, during this 2024 season, of the nine hurricanes formed to date, four have hit the United States, two of them striking Florida hard.

Brian Sussman is an award-winning meteorologist, former San Francisco radio talk host, and bestselling author.
