The CO2 error is the root of the biggest scam in the history of the world, and has already bilked nations and citizens out of trillions of dollars, while greatly enriching the perpetrators. In the end, their goal is global Technocracy (aka Sustainable Development), which grabs and sequesters all the resources of the world into a collective trust to be managed by them. ⁃ TN Editor

The Intergovernmental Panel on Climate Change (IPCC) claim of human-caused global warming (AGW) is built on the assumption that an increase in atmospheric CO2 causes an increase in global temperature. The IPCC claim is what science calls a theory, a hypothesis, or in simple English, a speculation. Every theory is based on a set of assumptions. The standard scientific method is to challenge the theory by trying to disprove it. Karl Popper wrote about this approach in a 1963 article, Science as Falsification. Douglas Yates said,

“No scientific theory achieves public acceptance until it has been thoroughly discredited.”

Thomas Huxley made a similar observation.

“The improver of natural knowledge absolutely refuses to acknowledge authority, as such. For him, skepticism is the highest of duties; blind faith the one unpardonable sin.”

In other words, all scientists must be skeptics, which makes a mockery of the charge that those who questioned AGW were “global warming skeptics.” Michael Shermer provides a likely explanation for the effectiveness of the charge.

“Scientists are skeptics. It’s unfortunate that the word ‘skeptic’ has taken on other connotations in the culture involving nihilism and cynicism. Really, in its pure and original meaning, it’s just thoughtful inquiry.”

The scientific method was not used with the AGW theory. In fact, the exact opposite occurred: they tried to prove the theory. It is a treadmill guaranteed to make you misread, misrepresent, misuse and selectively choose data and evidence. This is precisely what the IPCC did and continues to do.

A theory is used to produce results. The results are not wrong; they are only as right as the assumptions on which they are based. For example, Einstein used his theory of relativity to produce the most famous formula in the world: E = mc². You cannot prove it wrong mathematically because it is the end product of the assumptions he made. To test it and disprove it, you challenge one or all of the assumptions. One of these is represented by the letter “c” in the formula, which assumes nothing can travel faster than the speed of light. Scientists challenging the theory are looking for something moving faster than the speed of light.

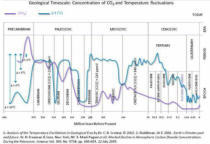

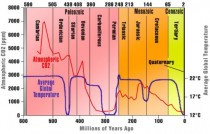

The most important assumption behind the AGW theory is that an increase in global atmospheric CO2 will cause an increase in the average annual global temperature. The problem is that in every record of temperature and CO2, the temperature changes first. Think about what I am saying. The basic assumption underlying the entire theory that human activity is causing global warming or climate change is wrong. The question is: how did the false assumption develop and persist?

The answer is that the IPCC needed the assumption as the basis for their claim that humans were causing catastrophic global warming, a claim made for a political agenda. They did what all academics do and found a person who gave historical precedent to their theory. In this case, it was the work of Svante Arrhenius. The problem is he didn’t say what they claim. Anthony Watts’ 2009 article identified many of the difficulties with relying on Arrhenius. The Friends of Science added confirmation when they translated a more obscure 1906 Arrhenius work. They wrote,

Much discussion took place over the following years between colleagues, with one of the main points being the similar effect of water vapour in the atmosphere which was part of the total figure. Some rejected any effect of CO2 at all. There was no effective way to determine this split precisely, but in 1906 Arrhenius amended his view of how increased carbon dioxide would affect climate.

The issue of Arrhenius mistaking a water vapor effect for a CO2 effect is not new. What is new is the growing body of empirical evidence that the warming effect of CO2, known as climate sensitivity, is zero. This means Arrhenius’s colleagues who “rejected any effect of CO2 at all” were correct. In short, CO2 is not a greenhouse gas.

The IPCC, through the definition of climate change given to it by the United Nations Framework Convention on Climate Change (UNFCCC), was able to predetermine its results. The UNFCCC defines climate change as:

a change of climate which is attributed directly or indirectly to human activity that alters the composition of the global atmosphere and which is in addition to natural climate variability observed over considerable time periods.

This allowed them to examine only human causes, thus eliminating almost all other variables of climate and climate change. You cannot identify the human portion if you don’t know or understand natural (that is, without human influence) climate or climate change. The IPCC acknowledged this in 2007 as people started to ask questions about the narrowness of their work. They offered the definition that many people thought they were using and should have been using. Deceptively, it only appeared as a footnote in the 2007 Summary for Policymakers (SPM), so it was aimed at the politicians. It said,

“Climate change in IPCC usage refers to any change in climate over time, whether due to natural variability or as a result of human activity. This usage differs from that in the United Nations Framework Convention on Climate Change, where climate change refers to a change of climate that is attributed directly or indirectly to human activity that alters the composition of the global atmosphere and that is in addition to natural climate variability observed over comparable time periods.”

Few at the time challenged the IPCC assumption that an increase in CO2 caused an increase in global temperature. The IPCC claimed it was true because when they increased CO2 in their computer models, the result was a temperature increase. Of course it was, because the computer was programmed for that to happen. These computer models are the only place in the world where a CO2 increase precedes and causes a temperature change. This probably explains why their predictions are always wrong.

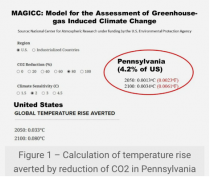

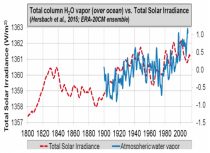

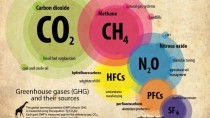

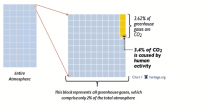

An example of how the definition allowed the IPCC to focus on CO2 is to consider the major greenhouse gases by name and percentage of the total. They are water vapour (H2O) 95%, carbon dioxide (CO2) 4%, and methane (CH4) 0.036%. The IPCC was able to overlook water vapour (95%) by admitting that humans produce some, but that the amount is insignificant relative to the total atmospheric volume of water vapour. The human portion of the CO2 in the atmosphere is approximately 3.4% of the total CO2 (Figure 1). To put that in perspective, approximately a 2% variation in water vapour completely overwhelms the human portion of CO2. This is entirely possible because water vapour is the most variable gas in the atmosphere, from region to region and over time.

In 1999, after two IPCC Reports produced in 1990 and 1995 had assumed that a CO2 increase caused a temperature increase, the first significant long-term Antarctic ice core record appeared. The record of Petit, Raynaud, and Lorius was presented as the best representation of levels of temperature, CO2, and deuterium over 420,000 years. It appeared the temperature and CO2 were rising and falling in concert, so the IPCC and others assumed this proved that CO2 was causing temperature variation. I recall Lorius warning against rushing to judgment and saying there was no indication of such a connection.

Euan Mearns noted in his robust assessment that the authors believed that temperature increase preceded CO2 increase.

In their seminal paper on the Vostok Ice Core, Petit et al (1999) [1] note that CO2 lags temperature during the onset of glaciations by several thousand years but offer no explanation. They also observe that CH4 and CO2 are not perfectly aligned with each other but offer no explanation. The significance of these observations are therefore ignored. At the onset of glaciations temperature drops to glacial values before CO2 begins to fall suggesting that CO2 has little influence on temperature modulation at these times.

Lorius reconfirmed his position in a 2007 article.

“our [East Antarctica, Dome C] ice core shows no indication that greenhouse gases have played a key role in such a coupling [with radiative forcing]”

Despite this, those promoting the IPCC claims ignored the empirical evidence and have done so to this day. Joanne Nova explains part of the reason they were able to fool the majority in her article, “The 800 year lag in CO2 after temperature - graphed,” in which she confirmed the Lorius concern.

“It’s impossible to see a lag of centuries on a graph that covers half a million years, so I have regraphed the data from the original sources...”

Nova concluded after expanding and more closely examining the data that,

The bottom line is that rising temperatures cause carbon levels to rise. Carbon may still influence temperatures, but these ice cores are neutral on that. If both factors caused each other to rise significantly, positive feedback would become exponential. We’d see a runaway greenhouse effect. It hasn’t happened. Some other factor is more important than carbon dioxide, or carbon’s role is minor.

Al Gore knew the ice core data showed temperature changing first. In his propaganda movie, An Inconvenient Truth, he separated the graphs of temperature and CO2 enough to make a comparison of the two more difficult. He then distracted with Hollywood histrionics by riding up on a forklift to the distorted 20th century reading.

Thomas Huxley said,

“The great tragedy of science- the slaying of a lovely hypothesis by an ugly fact.”

The most recent ugly fact was that after 1998 CO2 levels continued to increase but global temperatures stopped increasing. Other ugly facts included the return of cold, snowy winters, creating a PR problem by 2004. Cartoons appeared (Figure 2).

The people controlling the AGW deception were aware of what was happening. Emails from 2004 leaked from the University of East Anglia revealed the concern. Nick at the Minns/Tyndall Centre that handled publicity for the climate story said,

“In my experience, global warming freezing is already a bit of a public relations problem with the media.”

Swedish climate expert on the IPCC Bo Kjellen replied,

“I agree with Nick that climate change might be a better labelling than global warming.”

The disconnect between atmospheric CO2 levels and global temperatures continued after 1998. The level of deliberate blindness to what became known as the “pause” or the “hiatus” became ridiculous (Figure 3).

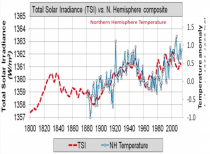

The assumption that an increase in CO2 causes an increase in temperature was incorrectly claimed in the original science by Arrhenius. He mistakenly attributed the warming caused by water vapour (H2O) to CO2. All the evidence since confirms the error. This means CO2 is not a greenhouse gas. There is a greenhouse effect, and it is due to the water vapour. The entire claim that CO2, and especially human-produced CO2, is causing warming is absolutely wrong, yet these so-called scientists convinced the world to waste trillions on reducing CO2. If you want to talk about collusion, consider the cartoon in Figure 4.

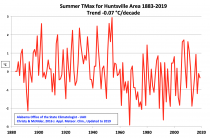

It has been an up and down year, but we are thankful for our many blessings. Thank you for your much needed and appreciated support in 2019. 2020 is a very critical year in our effort to get the science back on the right track. The AMS and AGU are celebrating their 100th anniversaries, but after decades of laudable work they have, along with off-the-rails climate folks at NOAA and NASA, been presenting dodgy attribution work to keep the money train on the track, which endangers our energy dominance and affordability and our near-full employment. Many companies, even energy and auto, and politicians (Democrats and some Republicans) are pushing some form of carbon tax, if not abandonment of fossil fuels, with plans for unreliable renewables.

Icecap has been here now for 13 years - with 122 million page hits. Be sure to use the Search Icecap function to find previous posts on any topic.

See also our sister sites:

Alarmist Claim Research is a site where a team of scientists has fact checked the 12 common alarmist claims. Be sure to click on the link to the detailed write-ups after the summaries with more detail and graphics here.

THSResearch which started with an analysis of the failed GHG climate models projection of the Tropical Hotspot here and the GAST report has been followed by many more analyses here.

Then there is a site developed for a roommate engineer at Wisconsin - mainly focused on technology and energy issues (here).

Of course, my focus has been on Weatherbell.com since 2011 - with daily posts on weather and climate and commercial posts and video. We have an excellent model and video service for weather enthusiasts.

------------

Here is a holiday story:

It’s that time of year for favorite old holiday movies

The movies “It’s a Wonderful Life” and “A Christmas Story” are perennial favorites.

It’s a Wonderful Life was produced by Frank Capra who produced many classic movies. The snow scenes look like upstate New York or New England in winter.

It may surprise you that the Bedford Falls set was in warm and sunny California: It covered four acres of the RKO ranch [in Encino, Calif.] and included 75 stores and buildings, a tree-lined center parkway with 20 fully grown oak trees, a factory district and residential areas. Main Street was 300 yards long, or three full-length city blocks.

The snow was fake snow. Frank Capra, who trained as an engineer, and special effects supervisor Russell Shearman engineered a new type of artificial snow for the film. At the time, painted corn flakes were the most common form of fake snow, but they posed a bit of an audio problem for Capra. So he and Shearman opted to mix foamite (the stuff you find in fire extinguishers) with sugar and water to create a less noisy option.

Here were the fans that blew the snow over the set.

The film was done in the summer I was born and filming at times occurred during a heat wave, which forced them to shut down for days. Though they were romping in what looked like snow, they were perspiring.

Though not popular in its day, the movie became a holiday favorite on TV and was ranked number one on the American Film Institute’s list of the most inspirational American films of all time.

One more note: I was watching It’s a Wonderful Life last week and saw someone playing the rent collector for Potter (Lionel Barrymore) who looked very familiar.

It was Charles Lane - here was a photo of him.

Charles Lane was an amazing man who appeared in 250 movies over 80 years and lived to 102 years of age!!!! He is very much the actor extraordinaire. See the incredible list here

It occurred to me, he was the spitting image of John Podesta, Clinton and Obama advisor.

Exposing the lies continues and has now breached the walls of the journal Nature.

Ocean acidification does not impair the behaviour of coral reef fishes (link).

Also

CO2 Emissions, Fossil Fuel Use and Human Longevity (Uploaded 9 January 2020)

by Dr. Craig Idso, CO2 Science

This video segment discusses the fundamental link between CO2 emissions and global life expectancy. It is patently false and borderline fraudulent to claim rising CO2 is enhancing human mortality rates, as many often do, when the data clearly demonstrate there are more people on earth today who are living longer and better lives because of rising CO2 and fossil fuel use…


-----------

The Toxic Rhetoric of Climate Change

“I genuinely have the fear that climate change is going to kill me and all my family, I’m not even kidding it’s all I have thought about for the last 9 months every second of the day. It’s making my sick to my stomach, I’m not eating or sleeping and I’m getting panic attacks daily. It’s currently 1 am and I can’t sleep as I’m petrified.” - Young adult in the UK

Letter from a worried young adult in the UK

I received this letter last nite, via email:

“I have no idea if this is an accurate email of your but I just found it and thought I’d take a chance. My name is Elan I’m 20 years old from the UK. I have been well the only word to describe it is suffering as I genuinely have the fear that climate change is going to kill me and all my family, I’m not even kidding it’s all I have thought about for the last 9 months every second of the day. It’s making my sick to my stomach, I’m not eating or sleeping and I’m getting panic attacks daily. It’s currently 1am and I can’t sleep as I’m petrified. I’ve tried to do my own research, I’ve tried everything. I’m not stupid, I’m a pretty rational thinker but at this point sometimes I literally wish I wasn’t born, I’m just so miserable and Petrified. I’ve recently made myself familiar with your work and would be so appreciative of any findings you can give me or hope or advice over email. I’m already vegetarian and I recycle everything so I’m really trying. Please help me. In anyway you can. I’m at my wits end here.”

JC’s response

We have been hearing increasingly shrill rhetoric from Extinction Rebellion and other activists about the ‘existential threat’ of the ‘climate crisis’, ‘runaway climate chaos’, etc. In a recent op-ed, Greta Thunberg stated: “Around 2030 we will be in a position to set off an irreversible chain reaction beyond human control that will lead to the end of our civilization as we know it.” From the Extinction Rebellion: “It is understood that we are facing an unprecedented global emergency. We are in a life or death situation of our own making.”

It is more difficult to tune out similar statements from responsible individuals representing the United Nations. In his opening remarks for the UN Climate Change Conference this week in Madrid (COP25), UN Secretary General Antonio Guterres said that “the point of no-return is no longer over the horizon.” Hoesung Lee, the Chair for the Intergovernmental Panel on Climate Change (IPCC), said “if we stay on our current path, [we] threaten our existence on this planet.”

So… exactly what should we be worried about? Consider the following statistics:

Over the past century, there has been a 99% decline in the death toll from natural disasters, during the same period that the global population quadrupled.

While global economic losses from weather and climate disasters have been increasing, this is caused by increasing population and property in vulnerable locations. Global weather losses as a percent of global GDP have declined about 30% since 1990.

While the IPCC has estimated that sea level could rise by 0.6 meters by 2100, recall that the Netherlands adapted to living below sea level 400 years ago.

Crop yields continue to increase globally, surpassing what is needed to feed the world. Agricultural technology matters more than climate.

The proportion of world population living in extreme poverty declined from 36% in 1990 to 10% in 2015.

While many people may be unaware of this good news, they do react to each weather or climate disaster in the news. Activist scientists and the media quickly seize upon each extreme weather event as having the fingerprints of manmade climate change - ignoring the analyses of more sober scientists showing periods of even more extreme weather in the first half of the 20th century, when fossil fuel emissions were much smaller.

So . . . why are we so worried about climate change? The concern over climate change is not so much about the warming that has occurred over the past century. Rather, the concern is about what might happen in the 21st century as a result of increasing fossil fuel emissions. Emphasis on ‘might.’

Alarming press releases are issued about each new climate model projection that predicts future catastrophes from famine, mass migrations, catastrophic fires, etc. However, these alarming scenarios of 21st century climate change require that, like the White Queen in Through the Looking-Glass, we believe ‘six impossible things before breakfast’.

The most alarming scenarios of 21st century climate change are associated with the RCP8.5 greenhouse gas concentration scenario. Often erroneously described as a ‘business as usual’ scenario, RCP8.5 assumes unrealistic long-term trends for population and a slowing of technological innovation. Even more unlikely is the assumption that the world will largely be powered by coal.

In spite of the implausibility of this scenario, RCP8.5 is the favored scenario for publications based on climate model simulations. In short, RCP8.5 is a very useful recipe for cooking up scenarios of alarming impacts from manmade climate change, which are of course highlighted and then exaggerated by press releases and media reports.

Apart from the issue of how much greenhouse gases might increase, there is a great deal of uncertainty about how much the planet will warm in response to a doubling of atmospheric carbon dioxide, referred to as ‘equilibrium climate sensitivity’ (ECS). The IPCC 5th Assessment Report (2013) provided a range between 1 and 6C, with a ‘likely’ range between 1.5 and 4.5C.

In the years since the 5th Assessment Report, the uncertainty has grown. The latest climate model results, prepared for the forthcoming IPCC 6th Assessment Report, show that a majority of the climate models are producing values of ECS exceeding 5C. The addition of poorly understood additional processes into the models has increased confusion and uncertainty. At the same time, refined efforts to determine values of the equilibrium climate sensitivity from the historical data record obtain values of ECS about 1.6C, with a range from 1.05 to 2.7C.

With this massive range of uncertainty in the values of equilibrium climate sensitivity, the lowest value among the climate models is 2.3C, with few models having values below 3C. Hence the lower end of the range of ECS is not covered by the climate models, resulting in temperature projections for the 21st century that are biased high, with a smaller range relative to the range of uncertainty in ECS.
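The gap described here can be illustrated with a few lines of arithmetic. The sketch below uses only the figures quoted above (an observational ECS range of 1.05 to 2.7C and a lowest model value of 2.3C); the function and variable names are illustrative, and comparing interval widths is a simplifying assumption, not a statement about probability.

```python
# Figures quoted in the text (degrees C per doubling of CO2).
OBS_ECS_LOW, OBS_ECS_HIGH = 1.05, 2.7   # range from historical-record estimates
MODEL_ECS_MIN = 2.3                      # lowest ECS among the climate models


def share_below(low: float, high: float, threshold: float) -> float:
    """Fraction of the interval [low, high] that lies below threshold.

    Assumption: the interval is treated as a plain range of values,
    so this compares widths only and says nothing about likelihood.
    """
    if high <= low:
        raise ValueError("high must be greater than low")
    # Clamp the threshold into the interval before measuring the width.
    covered = min(max(threshold, low), high) - low
    return covered / (high - low)


uncovered = share_below(OBS_ECS_LOW, OBS_ECS_HIGH, MODEL_ECS_MIN)
print(f"Share of the observational range below the lowest model: {uncovered:.0%}")
```

On these numbers, roughly three quarters of the width of the observational range sits below the lowest model value, which is the sense in which the lower end of the ECS range is not covered.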

With regards to sea level rise, recent U.S. national assessment reports have included a worst-case sea level rise scenario for the 21st century of 2.5 m. Extreme estimates of sea level rise rely on RCP8.5 and climate model simulations that are on average running too hot relative to the uncertainty range of ECS. The most extreme scenarios of 21st century sea level rise are based on speculative and poorly understood physical processes that are hypothesized to accelerate the collapse of the West Antarctic Ice Sheet. However, recent research indicates that these processes are very unlikely to influence sea level rise in the 21st century. To date, in most of the locations that are most vulnerable to sea level rise, local sinking from geological processes and land use has dominated over sea level rise from global warming.

To further complicate climate model projections for the 21st century, the climate models focus only on manmade climate change; they make no attempt to predict natural climate variations from the sun’s output, volcanic eruptions and long-term variations in ocean circulation patterns. We have no idea how natural climate variability will play out in the 21st century, and whether or not natural variability will dominate over manmade warming.

We still don’t have a realistic assessment of how a warmer climate will impact us and whether it is ‘dangerous.’ We don’t have a good understanding of how warming will influence future extreme weather events. Land use and exploitation by humans is a far bigger issue than climate change for species extinction and ecosystem health.

We have been told that the science of climate change is ‘settled’. However, in climate science there has been a tension between the drive towards a scientific ‘consensus’ to support policy making, versus exploratory research that pushes forward the knowledge frontier. Climate science is characterized by a rapidly evolving knowledge base and disagreement among experts. Predictions of 21st century climate change are characterized by deep uncertainty.

As noted in a recent paper co-authored by Dr. Tim Palmer of Oxford University, there is “deep dissatisfaction with the ability of our models to inform society about the pace of warming, how this warming plays out regionally, and what it implies for the likelihood of surprises.” “Unfortunately, [climate scientists] circling the wagons leads to false impressions about the source of our confidence and about our ability to meet the scientific challenges posed by a world that we know is warming globally.”

We have not only oversimplified the problem of climate change, but we have also oversimplified its ‘solution’. Even if you accept the climate model projections and that warming is dangerous, there is disagreement among experts regarding whether a rapid acceleration away from fossil fuels is the appropriate policy response. In any event, rapidly reducing emissions from fossil fuels to ameliorate the adverse impacts of extreme weather events in the near term increasingly looks like magical thinking.

Climate change - both manmade and natural - is a chronic problem that will require continued management over the coming centuries.

We have been told that climate change is an ‘existential crisis.’ However, based upon our current assessment of the science, the climate threat is not an existential one, even in its most alarming hypothetical incarnations. However, the perception of manmade climate change as a near-term apocalypse has narrowed the policy options that we’re willing to consider. The perceived ‘urgency’ of drastically reducing fossil fuel emissions is forcing us to make near term decisions that may be suboptimal for the longer term. Further, the monomaniacal focus on elimination of fossil fuel emissions distracts our attention from the primary causes of many of our problems that we might have more success in addressing in the near term.

Common sense strategies to reduce vulnerability to extreme weather events, improve environmental quality, develop better energy technologies and increase access to grid electricity, improve agricultural and land use practices, and better manage water resources can pave the way for a more prosperous and secure future. Each of these solutions is ‘no regrets’, supporting climate change mitigation while improving human well being. These strategies avoid the political gridlock surrounding the current policies and avoid costly policies that will have minimal near-term impacts on the climate. And finally, these strategies don’t require agreement about the risks of uncontrolled greenhouse gas emissions.

We don’t know how the climate of the 21st century will evolve, and we will undoubtedly be surprised. Given this uncertainty, precise emissions targets and deadlines are scientifically meaningless. We can avoid much of the political gridlock by implementing common sense, no-regrets strategies that improve energy technologies, lift people out of poverty and make them more resilient to extreme weather events.

The extreme rhetoric of the Extinction Rebellion and other activists is making political agreement on climate change policies more difficult. Exaggerating the dangers beyond credibility makes it difficult to take climate change seriously. On the other hand, the extremely alarmist rhetoric has frightened the bejesus out of children and young adults.

JC message to children and young adults: Don’t believe the hype that you are hearing from Extinction Rebellion and the like. Rather than going on strike or just worrying, take the time to learn something about the science of climate change. The IPCC reports are a good place to start; for a critical perspective on the IPCC, Climate Etc. is a good resource.

Climate change, manmade and/or natural, along with extreme weather events, provides reasons for concern. However, the rhetoric and politics of climate change have become absolutely toxic and nonsensical.

In the meantime, live your best life. Trying where you can to lessen your impact on the planet is a worthwhile thing to do. Societal prosperity is the best insurance policy that we have for reducing our vulnerability to the vagaries of weather and climate.

JC message to Extinction Rebellion and other doomsters: Not only do you know nothing about climate change, you also appear to know nothing of history. You are your own worst enemy - you are triggering a global backlash against doing anything sensible about protecting our environment or reducing our vulnerability to extreme weather. You are making young people miserable, who haven’t yet experienced enough of life to place this nonsense in context.

November 22, 2019/ Francis Menton

If you get most of your news passively by just reading what comes up in some kind of Facebook or Google feed or equivalent, you probably have the impression that the Climate Wars are over and the Climate Campaigners have swept the field of battle.

In my case, I certainly don’t rely on those kinds of toxic sources of information, but I do regularly monitor many of the media sources in the “mainstream” category - the New York Times, the Washington Post, Bloomberg, the Economist, Politico, and several of the television networks like CBS, ABC, NBC and CNN. All of those (and plenty more) have clearly put an absolute ban on any news or information that would cast even the slightest negative light on the proposition that there is an imminent “climate crisis” that must be solved by government transformation of the world economy.

I’ll give a couple of examples of the lengths to which this has gone. Back in September, mentally unstable Swedish teenager Greta Thunberg, whose only qualification was her ignorant passion for climate extremism, got the platform of the UN “Climate Action Summit” for a big speech. Excerpt:

You have stolen my dreams and my childhood with your empty words. And yet I’m one of the lucky ones. People are suffering. People are dying. Entire ecosystems are collapsing. We are in the beginning of a mass extinction, and all you can talk about is money and fairy tales of eternal economic growth. How dare you!

You would think that sane people would want to stay as far from Greta as possible lest they get accused of child abuse. But instead, Greta is feted as a heroine. In October something called the Nordic Council awarded young Greta its 2019 Environmental Award. (It seems that she has rejected the award, thus claiming for herself an even higher level of holiness among true believers.)

Meanwhile, over in Germany, a German think tank called the European Institute for Climate and Energy (EIKE in the German acronym) planned to hold a climate conference this past weekend at a hotel called the NH in Munich. From NoTricksZone November 19:

According to EIKE spokesman, Prof. Horst-Joachim Ludecke, “a left-green mob” pressured the hotel management of the NH Congress Center in Munich (Aschheim) “to illegally cancel the accommodation contract”.

Apparently, the unforgivable sin of EIKE was allowing some scientists from the skeptic camp to appear and speak at their conference. EIKE went to court to try to get an injunction against the last-minute cancelation of their contract, but the German court upheld the cancelation on the ground that “security” concerns trumped free speech. NTZ indicates in an update that the conference was able to find an alternative location at the last minute and to proceed; but of course, the last-minute change of venue and secret location were huge negatives in trying to get any publicity for the conference.

So the very last vestiges of dissent are in the process of getting stamped out. Surely then, the transformation of the world economy and of its use of energy cannot be stopped.

Actually, out there in the world, reality continues to trump hysteria. Do you remember reports from a couple of years ago that China was ceasing to develop fossil fuel power and was becoming a “climate leader” by going all in for trendy renewables like wind and solar? Well, that was to fool the dopes. Just this month, something called Global Energy Monitor is out with a new report on what is happening on the ground in China. Bottom line: 148 gigawatts of coal-fired capacity under active construction or with construction being resumed after suspension. The Global Energy Monitor people (who seem to be associated with the End Coal campaign) could not be more horrified:

[A] permitting spree [from 2014 to 2016] brought a cohort of 245 GW of new projects nearly equivalent to the U.S. coal fleet (254 GW) into the developmental pipeline, inflating what was already an overbuilt coal power fleet, with the average running hours for China’s coal plants hovering around 50% since 2015. Today, 147.7 GW of coal plants are either under active construction or under suspension and likely to be revived - an amount nearly equal to the existing coal power capacity of the European Union (150 GW)… Coal and power industry groups are proposing the central government increase total coal power capacity by 20 to 40% to between 1,200 and 1,400 GW as part of China’s 2035 infrastructure plan.

At 1400 GW of coal power capacity, China would be closing in on 6 times U.S. coal power capacity. Why again are we bothering with this whole decarbonization thing? (H/t Global Warming Policy Foundation)

And over in Germany, the fantasy that wind power can be competitive with fossil fuel power also keeps running into the wall of the real world. Der Spiegel reported on November 19 that the end of certain subsidies, along with opposition from local environmentalists who don’t want forests of ugly wind turbines in their localities, has put the German (and European) wind industry in “free fall”.

The manufacturers of turbines and solar panels are dropping like flies, as subsidies are rolled back across Europe. So-called ‘green’ jobs are a case of easy come, easy go. The wind and solar ‘industries’ that gave birth to those jobs simply can’t survive without massive and endless subsidies, which means their days are numbered. With the axe being taken to subsidies across the globe, their ultimate demise is a matter of when, not if. The wind back in subsidies across Europe has all but destroyed the wind industry: in Germany this year a trifling 35 onshore wind turbines have been erected, so far. Twelve countries in the European Union (EU) failed to install “a single wind turbine” last year.

Meanwhile, fracking in the U.S. continues to keep supplies of oil and gas plentiful, and prices reasonable. Petrostates like Russia, Venezuela, Iran and Saudi Arabia are on the run. So who is really winning the climate wars?

----------------------

Nobody Will Stop Africa From Developing Its Fossil Fuel Resources

November 09, 2019/ Francis Menton

In prior posts where I have addressed the futility of jurisdictions in the U.S. trying to “save the planet” by reducing their use of fossil fuels, my focus has generally been on China and India. Those countries have huge populations (about 1.4 billion each) and still-poorly-developed energy infrastructure. Of course they are going to continue to build power plants until everybody has access to reliable electricity. And of course they are going to make use of coal, oil and natural gas, because those fossil fuels provide the cheapest and most reliable energy. The ongoing increase in emissions from China and India as they build out their electricity systems and as their people acquire automobiles regularly swamps any minor emissions reductions that any jurisdictions in the U.S. can achieve.

But let us also not overlook Africa. Africa’s population is currently about 1.3 billion, but growing much faster than that of China or India. The UN projects a population for Africa of 2.5 billion for 2050, and 4 billion for 2100. Meanwhile, close to half of the current 1.3 billion Africans lack access to electricity; and that number will only grow rapidly in the absence of rapid buildout of an electrical grid throughout the continent.

You may have seen predictions in certain quarters that Africa is going to “go green” as it gains access to energy. But what is the reality on the ground? We can get a good indication by looking at what happened last week at the Africa Oil Week convention, held this year in Cape Town, South Africa. Reuters had a report on the event, with the headline “No apologies: Africans say their need for oil cash outweighs climate concerns.”

It seems that the Africa Oil Week convention was attended by representatives of some 75 countries, including 23 energy ministers. According to Reuters, unlike the scene at similar confabs held in Europe, at this one pretty much no one gave a hoot about the issue of “climate change”.

The tension keenly felt at oil conferences in Europe was largely absent over the three-day event in Cape Town; there was little focus on climate change…

One after another, delegates interviewed by Reuters stated that they were not going to let non-Africans buffalo them into not developing their fossil fuel resources. Examples:

From Gabriel Obiang Lima, energy minister of Equatorial Guinea: “Under no circumstances are we going to be apologizing [for developing our fossil fuel resources]… Anybody out of the continent saying we should not develop those [oil] fields, that is criminal.”

From Gwede Mantashe, energy minister of South Africa: “Energy is the catalyst for growth… [Environmentalists] even want to tell us to switch off all the coal-generated power stations,” he said. “Until you tell them, ‘you know we can do that, but you’ll breathe fresh air in the darkness’.”

From Noel Mboumba, hydrocarbons minister of Gabon: “Oil is a major driver of development. We will do all in our power to develop it.”

Meanwhile, Africa News has a roundup from the same convention of various countries announcing plans to move forward on fossil fuel development and investment projects:

Senegal’s Oil and Energy Minister Mahamadou Makhtar Cisse used the platform to launch… a licensing round of three blocks of sediment basin.

Angola’s newly formed national oil, gas and biofuels agency, ANGP, announced that the country has formed a consortium with five international oil companies, including Eni and Chevron, to develop liquefied natural gas (LNG)…

Uganda is highlighting the ongoing second licensing round for oil exploration, which covers five highly prospective blocks with relatively good seismic and other data, Minister [Irene] Muloni said…

Ghana told AOW delegates that plans, revising its laws on oil and gas licenses, sent to parliament last week, are an effort to spur production…

Chairman of Mozambique’s upstream regulator, INP, Carlos Zacarias announced that the country’s long-awaited sixth licensing round is due to be launched early next year.

Somalian Minister of Petroleum and Mineral Resources Abdirashid Mohamed Ahmed said his country was embarked on a path to transform Somalia’s petroleum industry and attract the attention of new investors…

Even as the Oil Week convention proceeded, outside the hotel, a small group of protesters from Extinction Rebellion did their thing, labeling the event a “Climate Criminals Conference.” Here is a photograph from Reuters:

Notice that all of the protesters are white. Their objective is to keep the poor poor. Somehow they have convinced themselves that they have the moral high ground.

By Joseph D’Aleo, CCM

Many energy companies, automakers and other corporations are increasing their support of decarbonization programs and policies (including taxes, mandated reductions in our use of fossil fuels, and the pushing of not-ready-for-prime-time alternatives). This has proved to be a disaster in all countries that followed this unwise, radical enviro track.

See how a 2014 Senate report showed there is a left-wing billionaires’ club which has used environmentalism to control the economy and subvert democracy here. We are working to educate the increasingly compliant organizations and key politicians at all levels. We get no help from the media, which refuses to cover any alternative views on this critical issue. All our efforts (posts, editorials, LTEs, videos, talks to science teachers, taxpayers associations, state legislators, Rotary Clubs and institutes, and peer reviewed reports like this) have been done pro bono. Attacks on us have always included claims we were funded by big oil and the Koch Brothers. I wish. If you can, please support our efforts with a donation (secure PAYPAL button on the left).

-------------------------

CO2 - THE GAS OF LIFE

CO2 is a beneficial trace gas (0.04% of our atmosphere). With every breath we exhale 100 times more CO2 than we breathe in, so it is not harmful. The increase in CO2 has caused a significant greening of the earth, with increased crop yields feeding more people at lower cost.

Dr. Craig Idso of CO2 Science noted recently, “Carbon dioxide is not a pollutant and it is most certainly not causing dangerous global warming. Rather, its increase in the atmosphere is invigorating the biosphere, producing a multitude of benefits for humanity and the natural world, notwithstanding the prognostications of the uninformed.” Dr. Will Happer, a Princeton physicist, says we are coming out of a CO2 drought and humanity would benefit from CO2 being 2 to 3 times higher.

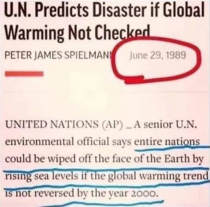

It’s not the first time we were told we faced an existential threat due to climate change. In 1970, Stanford’s Paul Ehrlich warned that because of population growth, climate stress (then cold) and dwindling energy that between 1980 and 1989, some 4 billion people, including 65 million Americans, would perish in the “Great Die-Off” which was too late to stop. Even as each subsequent dire forecast failed (see how the alarmist/media record is perfect (100% wrong) in the 50 major claims made since 1950 here), the alarms continued, each pushing the date forward - 2000, 2020, and now 2030. This summer at Glacier National Park signs “Warning: glaciers will be gone by 2020” were quietly removed as ice and snow increased.

A team of scientific experts evaluated the 12 most commonly reported claims and found them all unfounded.

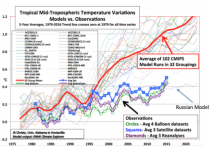

The climate models used to predict the future have all failed miserably.

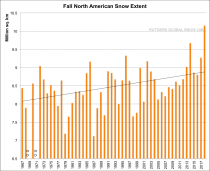

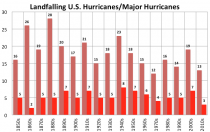

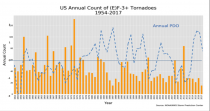

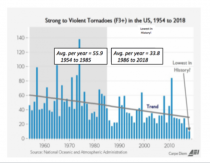

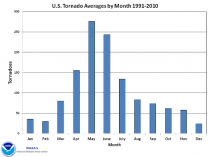

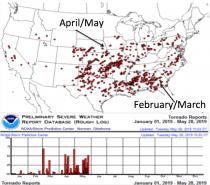

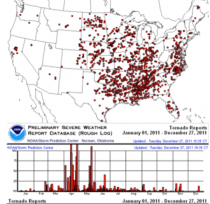

Heat records have declined since the 1930s, which holds 23 of the 50 state hottest-ever temperature records. This was the second quietest decade for landfalling hurricanes and major hurricanes since 1850. This was the quietest decade for tornadoes since tracking began in the 1950s. Sea level rises have slowed to 4 inches/century globally. Arctic ice has tracked with the 60-year ocean cycles and is similar to where it was in the 1920s to 1950s. NOAA could find no evidence of increased frequency of floods and droughts (this spring had the smallest % of US in drought on record). Snow, which the university scientists here predicted would disappear, has actually set new records (fall and winter) for the hemisphere and North America, and both Boston and NYC have had more snow in the 10 years ending 2018 than in any other 10-year period back to the late 1880s. Wildfires cause havoc but were far more prevalent before the forest management, fire suppression and grazing of the 1900s. They are problems now because more people have left the failing cities to move out of state or to the beauty of the foothills. The power lines that service them can spark new fires when the cold air rushes through the mountain passes at this time of year, downing trees onto the power lines.

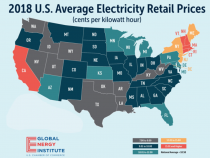

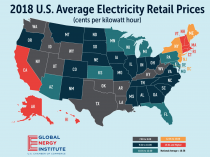

In the U.S., with low cost energy, lowered taxes and elimination of unnecessary regulations, we now have the lowest unemployment for the nation in decades or history and, for the first time in a long time, significant wage increases! Here in NH, we have the lowest unemployment in the nation. The U.S. is energy independent, a goal long thought unachievable. Our air and water are the cleanest in our lifetimes, well below the tough standards we put in place decades ago.

The real existential threat would come from radical environmentalism and the prescribed remedies. The scare is politically driven, all about big government and control over every aspect of our lives. AOC’s chief of staff Saikat Chakrabarti in May admitted that the Green New Deal was not conceived as an effort to deal with climate change, but instead a “how-do-you-change-the-entire economy thing” - nothing more than a thinly veiled socialist takeover of the U.S. economy. He was echoing what the UN climate chief and a UN IPCC Lead Author said - that it was our best chance to change the economic system (to centralized control) and redistribute wealth (socialism).

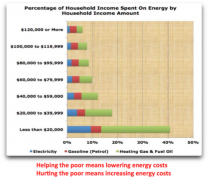

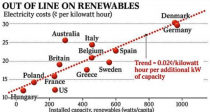

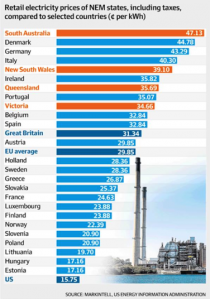

Every country that has moved down an extreme green path has seen skyrocketing energy costs - 3 times our levels.

Renewables are unreliable, as the wind doesn’t always blow nor the sun shine. And don’t believe the claims that millions of green jobs would result. In Spain, every green job created cost $774,000 in subsidies and resulted in a loss of 2.2 real jobs. Only 1 in 10 green jobs were permanent. Industry left, and in Spain unemployment rose to 27.5%.

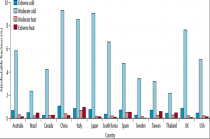

Many households in the countries that have gone green are said to be in “energy poverty” (25% UK, 15% Germany). The elderly are said in winter to be forced to “choose between heating and eating”. Extreme cold already kills 20 times more than heat according to a study of 74 million deaths in 13 countries.

Politicians in the northeast states are bragging that they stopped the natural gas pipeline, shut down nuclear and coal plants and blocked the Northern Pass, which would have delivered low cost hydropower from Canada. In Concord, they are now scurrying to try to explain why electricity prices here are 50 to 60% higher than the national average and are speculating they have not moved fast enough with wind and solar. Several states have even established zero carbon emissions targets. This will lead to soaring energy prices and life-threatening blackouts. For a family of 4 in a modest house with 3 cars, energy costs could increase over $10,000/year (based on a sample of households and their energy costs multiplied by 3, as has occurred in countries with an onerous green agenda). And by the way, as in Europe where this plan was enacted, many will lose their jobs. They are being told what (if) they can drive and what they can eat.

Prosperity always delivers a better life AND environment than poverty.

“If you don’t know where you are going, you might end up somewhere else.” - Yogi Berra

Thank you for supporting ICECAP. We present the opinions of many real scientists who are driven not by the profit motive but by the truth. We are all sincerely worried about policies that are based on political motivations and not science, which could not only destroy the prosperity so many have worked hard to achieve but also prevent the have-nots from becoming self-sufficient and living more productive and fulfilling lives. You see, elitists and politicians recognize that if people are self-sufficient, they lose their power and control. Along the same lines, we worry about our failing education system. Instead of taxing hard-working taxpayers to help our young people pay off college loan debt for useless degrees, perhaps we need to employ class action suits to go after universities and administrators, overpaid faculty and their bloated endowments.

By Joseph D’Aleo, CCM

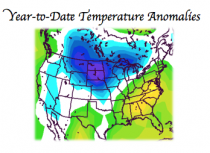

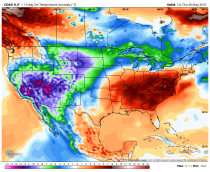

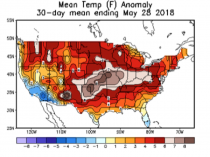

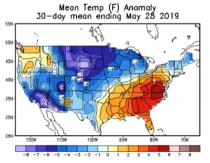

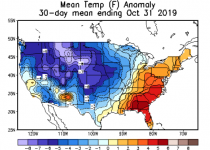

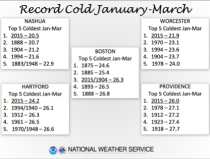

Starting in January 2019, unusual and at times record cold has been locked in over the north central states.

Though there was heat in late summer in the southeast and eastern Gulf to the Mid-Atlantic, the cold held in the north central. After a very cold spring with late snows, which significantly delayed or prevented grain planting, a cool summer followed and gave way to a very early cold shot in late September that brought early deep freezes and even record snows in the north central leading to significant crop losses.

There have been 90 all-time record lows versus just 44 all-time record highs this year. That included the all-time state record low of -38F in Mount Carroll in Illinois on January 31st.

The cold in the central states deepened in October and pushed to the east, bringing very early snow into the Midwest. October saw 3680 record daily lows, 32 all-time record lows for the month and no all-time record monthly highs (NOAA NCEI).

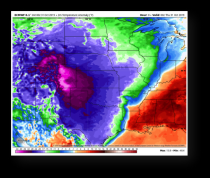

After bringing heavy snows to the Rockies and High Plains, the cold rolled south with temperatures 30 to 50 degrees below normal.

Temperatures dropped to a record of -35F at Logan County Sink in Utah and -46F in Peter’s sink, record coldest for the U.S. for the month of October.

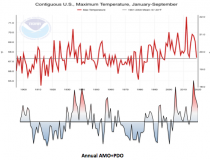

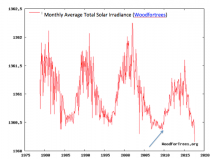

Temperatures for the first 9 months of the year have tracked well with multidecadal cycles in the ocean over the last 120 years.

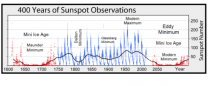

The cold also follows solar activity. We are currently seeing the quietest sun in a century or more. In the period during and following the last 11-year cycle low (2007-2011), we had brutal cold and snow here in the U.S. and in Europe.

In December 2010, the Central England Temperature (the longest continuous record, going back to 1659) showed the second coldest December. Snow, which was forecast to be a thing of the past, instead buried the UK for long periods, reminiscent of the Dalton solar minimum of the early 1800s as evidenced by Dickens’s novels.

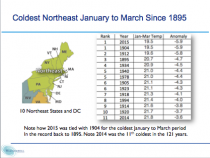

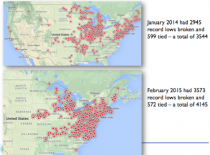

In the US, the record cold and snow of the Snowmageddon Mid-Atlantic winter of 2009/10 was eclipsed by the record winters of 2013/14 and 2014/15, which brought the coldest and snowiest winters and modern-day peaks of Great Lakes ice.

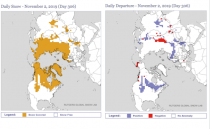

The snow in the hemisphere is increasing very rapidly and is above normal, which should expand and enhance the cold. Note how fall snow extent was at record levels last fall.

Given the projections by Russian scientists and many in the west, including some at NASA, we could be heading into a deep and long solar minimum like the Maunder Minimum, with a major cooling. Whether it is a several-decade Dalton-like period or a Maunder, this is no time to abandon cheap, available energy.

Even in the warmer interlude we have enjoyed, cold weather kills 20 times as many people as hot weather, according to an international study analyzing over 74 million deaths in 384 locations across 13 countries.

By Joseph D’Aleo, CCM, AMS Fellow

UPDATE: See The Real Climate Crisis is Not Global Warming By Allan MacRae and Joseph D’Aleo here.

---------

I have been doing battle in the local newspaper here in NH with a warmist, Shawn Freeman, who claimed I was a charlatan, a well-known denier who is bought and paid for by big oil and the Koch Brothers, and not a scientist or climatologist, because real scientists and climatologists must follow the accepted climate theories and models and publish any challenging work in journals like Nature. I rebutted in detail here and also had a letter posted defending me and my career work by a former student who went on to get a PhD in meteorology and had a great career in the Air Force, including as meteorological support for the space shuttle program. But the politically driven attack continued.

Shawn came back at me saying I was denying the long-standing work of Arrhenius and cherry-picking data. The paper would not let me rebut that attack on my credentials. We will do a cable show on this issue showing how the warmists work to silence dissenting voices while just riding the natural cycles in weather (using the cooling in the 1970s and then the warming into the 1990s) and riding the media coverage of every extreme event to push an agenda that hopes to control energy (and health) and in that way control all aspects of our lives.

I have managed Icecap for 12 years while I worked 3 different careers. We rely on you, the readers, to help us just pay our costs of keeping the site going - over $500/year. I took losses in recent years, though I do appreciate the contributions people have given to help defray some of the costs. Please help if you can with a donation (secure PAYPAL button on the left) or an offer to co-author stories and help market the site (jdaleo6331@aol.com). I still work 7 days/week at Weatherbell Analytics.

That second rejected rebuttal I submitted is below:

Dr. Richard Feynman, the famous Cornell physicist said in support of the scientific method “If a theory or proposed law disagrees with experiment (data), it’s wrong. In that simple statement is the key to science. It doesn’t make any difference how beautiful your guess is, it doesn’t matter how smart you are who made the guess, or what your name is… If it disagrees with data, it’s wrong. That’s all there is to it.”

I never stated CO2 was not a greenhouse gas. But it is what we call a trace gas - 0.04% by volume compared to up to 4% for water vapor, which is responsible for 95% of the greenhouse effect. Warm, humid days are followed by warm, muggy nights. Also, CO2 is close to being maxed out in terms of its minor heat trapping... Shawn’s inference is that it is unlimited. CO2 is not a thick down comforter, more like an incredibly thin gossamer sheet.

And it is a beneficial gas. Dr. Craig Idso of CO2 Science, which was entrusted by NOAA with the first temperature data set around 1990, was lead author of the multi-volume NIPCC study that reviewed over 4000 peer reviewed studies. He noted recently, “Carbon dioxide is not a pollutant and it is most certainly not causing dangerous global warming. Rather, its increase in the atmosphere is invigorating the biosphere, producing a multitude of benefits for humanity and the natural world, notwithstanding the prognostications of the uninformed.”

Charles Brooks observed in the Compendium of Meteorology (1951), that the CO2 theory of climate change, advanced by Arrhenius, “was never widely accepted and was abandoned when it was found that all the long-wave radiation absorbed by CO2 is also absorbed by water vapor.” He considered the observed rise in both CO2 and global temperatures (1920s-1950s) documented to be nothing more than a “coincidence.”

Indeed, CO2 follows, not leads, temperatures on short and long time frames, as oceans, the primary storehouse of CO2, give off CO2 when they warm and absorb it when they cool.

Overall, the urban heat island is a far more significant anthropogenic factor than CO2. You hear it every night on local TV forecasts. It was adjusted for in the first US data set in 1989. Tom Karl, Director of NCDC, said that if they didn’t, an artificial warming of 6F/century would result. He was pressured to remove it in version 2 a decade ago to get the appearance of warming the government wanted.

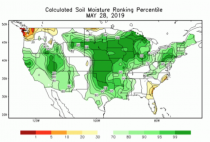

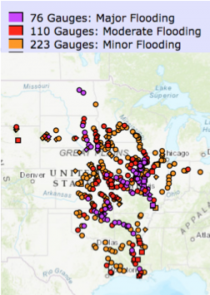

It is clear from your fiery letters that you did not read my reply nor look at the detailed analysis I linked to here, prepared by experts, most from the universities and even UN IPCC scientists, that shows the 13 climate extreme claims are all wrong. Heat records have declined since the 1930s. This was the second quietest decade for landfalling hurricanes and major hurricanes since 1850. This was the quietest decade for tornadoes since tracking began in the 1950s. Sea level rises have slowed to 4 inches/century globally. Arctic ice has tracked with the 60-year ocean cycles and is similar to where it was in the 1920s to 1950s. NOAA could find no evidence of increased frequency of floods and droughts (this spring had the smallest % of US in drought on record). Snow, which the university scientists here predicted would disappear, has actually set new records (fall and winter) for the hemisphere and North America, and both Boston and NYC have had more snow in the 10 years ending 2018 than in any other 10-year period back to the late 1880s.

I described in my first rebuttal to Shawn (page 14 here) my journey over five decades working with data and government and university scientists to understand how natural cycles in the oceans and on the sun, along with volcanism, drive the observed cycles in temperatures and weather globally.

I authored a book in 2002 on El Nino and La Nina for Greenwood Publishing and produced a series of 10 scientific peer reviewed papers on the effects of the ocean, solar factors and volcanism (which cools the earth by blocking sunlight) and also on the nearly impossible task of reconstructing accurate global temperature trends from very sparse imperfect data. They appeared in journals and two editions of Evidence Based Climate Science.

I developed and successfully used in industry statistical models for forecasting based on these inputs. I also participated in peer reviewed correlation studies that explained all the observed variances with natural factors with a high level of statistical significance.

One of my colleagues in response to your rebuttal wrote me “It is worth noting that the field of climatology is very new...only a couple of decades old, really. People who call themselves climatologists come from a wide array of educational backgrounds, and most of those backgrounds do not include the study, monitoring, or history of our atmosphere...According to Shawn, all you have to do is have something published in NATURE to become a beacon of unassailable credibility, which is ludicrous.”

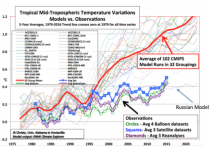

Meanwhile the greenhouse computer models are failing miserably - overstating the warming by a factor of 3. The only model that comes close to matching satellite-derived temperatures is a Russian one that minimizes the greenhouse effect and includes solar.

When warming stopped for 18-plus years, UN IPCC Lead Author Kevin Trenberth, in a 2014 paper in Nature, acknowledged that the ‘pause’ was explained by the influence of natural factors like El Nino and ocean cycles.

Dr. Cliff Mass, a UWA professor who believes as I do that both man and nature play a role in weather and climate, described a group that is preventing the proper application of the scientific method to understand and prepare properly for climate changes.

He observed “they are mainly on the political left, are highly partisan, anxious and often despairing, self-righteous, big on blame and social justice, and willing to attack those that disagree with them. They often distort the truth when it serves their interests. They also see social change as necessary for dealing with global warming, requiring the very reorganization of society.”

You went ad hominem twice - you attacked me as a bought-and-paid-for charlatan, when 2 trillion dollars has fed the global warmist monster, not climate realists like me. Like my scientist friends, I chose not to give in for monetary gain but to work with my colleagues to uncover the truth so our leaders make the right decisions about energy and economic policies.

You said I cherry picked quotes using UN . I quoted what he said exactly as reported in the global press.

Petteri Taalas, the secretary-general of the World Meteorological Organization (WMO), told the Talouselama magazine in Finland that he disagrees with doomsday climate extremists who call for radical action to prevent a purported apocalypse.

Taalas said that establishment meteorological scientists are under increasing assault from radical climate alarmists who are attempting to move the mainstream scientific community in a radical direction. He expressed specific concern with some of the solutions promoted by climate alarmists, including calls for couples to have no more children.

“While climate skepticism has become less of an issue, now we are being challenged from the other side. Climate experts have been attacked by these people and they claim that we should be much more radical. They are doomsters and extremists. They make threats,” Taalas said.

“The latest idea is that children are a negative thing. I am worried for young mothers, who are already under much pressure. This will only add to their burden.”

Then there are these quotes from the recent UN climate leaders:

“In searching for a new enemy to unite us, we came up with the idea that pollution, the threat of global warming... would fit the bill... It does not matter if this common enemy is a real one or... one invented for the purpose.” - Club of Rome, advisor to the UN, “First Global Revolution,” 1991

UN openly Marxist Climate Chief Christiana Figueres said, “Our aim is not to save the world from ecological calamity but to change the global economic system (destroy capitalism).” UN IPCC Lead Author Ottmar Edenhofer said in November 2010, “One has to free oneself from the illusion that international climate policy is environmental policy.” Instead, climate change policy is about how “we redistribute de facto the world’s wealth.”

--------

My greatest fear is that if they prevail with any of the Green New Deals put on the table by candidates, energy costs will skyrocket. See the numbers for yourself.

Take the monthly and annual cost of energy - electricity, gasoline, heating oil/natural gas - and multiply it by up to a factor of 3, which has been the case in other countries that have gone down this path.

We have a small sample of this when looking at the high prices in nutty California and the northeast with their RGGI program. NH Democrats are scurrying to try to explain the high costs and have concluded it is because they haven’t moved fast enough with the green agenda.

A new Green Deal package could cost up to $10,000 more/year/household. Also consider that with these policies of taxing corporations and working Americans to fund Medicare for All, free college benefits and the costs of open borders, unemployment, now at lifetime lows, will skyrocket. So you will have no jobs or reduced wages and higher costs to fund the left’s wild dreams.

When they get control, the globalists show their real motives - consider beautiful Ireland.

“Nudge” policies such as huge tax hikes, as well as bans and red tape outlined in the plan, will pave the way to a “vibrant” Ireland of zero carbon emissions by 2050 according to the government, which last year committed to boosting the country’s 4.7 million-strong population by a further million with mass migration.

In order to avert a “climate apocalypse”, the government plans to force people “out of private cars because they are the biggest offenders for emissions”, according to transport minister Shane Ross whose proposals - which include banning fossil fuel vehicles from towns and cities nationwide - are poised to cripple ordinary motorists, local media reports.

Launching the plan in Dublin, leader Leo Varadkar outlined his vision for an Ireland of ‘higher density’ cities consisting of populations whose lifestyles and behaviors have been totally transformed by ‘carrot and stick’ policies outlined in the climate plan.

“Our approach will be to nudge people and businesses to change behavior and adopt new technologies through incentives, disincentives, regulations, and information,” the globalist prime minister said.

Help us crush this globalist master plan before it comes here if you can.

--------

Dr. Craig Idso talks about the benefits of CO2 and the bad science that has led us to the precipice.

In recent years, governments and elected officials at all levels have proposed or enacted regulations and laws designed to restrict the use of fossil fuels via tax, caps or fiat limits on CO2 emissions. Such efforts are based upon the notion that CO2 emissions are polluting the atmosphere and causing dangerous global warming. However, nothing could be further from the truth. CO2 emissions and fossil fuel use have actually enhanced life and improved the standard of living, and will continue to do so as more fossil fuels are used…

Excellent Summary from Green Job Myths paper here.

ANDREW P. MORRISS H. Ross and Helen Workman Professor of Law & Professor of Business University of Illinois morriss@law.uiuc.edu

WILLIAM T. BOGART Dean of Academic Affairs and Professor of Economics York College of Pennsylvania wtbogart@ycp.edu

ANDREW DORCHAK Head of Reference and Foreign/International Law Specialist Case Western Reserve University School of Law andrew.dorchak@case.edu

ROGER E. MEINERS John and Judy Goolsby Distinguished Professor of Economics and Law University of Texas-Arlington meiners@uta.edu

This paper can be downloaded without charge

SUMMARY

Unfortunately, the analysis provided in the green jobs literature is deeply flawed, resting on a series of myths about the economy, the environment, and technology. We have explored the problems in the green jobs analysis in depth; we now conclude by summarizing the mythologies of green jobs in seven myths about green jobs:

Myth 1: There is such a thing as a “green job.” There is no coherent definition of a green job. Green jobs appear to be ones that pay well, are interesting to do, produce products that environmental groups prefer, and do so in a unionized workplace. Yet such criteria have little to do with the environmental impacts of the jobs. To build a coalition for a far reaching transformation of modern society, “green jobs” have become a mechanism to deliver something for every member of a real or imagined coalition to buy their support for a radical transformation of society.

Myth 2: Creating green jobs will boost productive employment. Green jobs estimates include huge numbers of clerical, bureaucratic, and administrative positions that do not produce goods and services for consumption. Simply hiring people to write and enforce regulations, fill out forms, and process paperwork is not a recipe for creating wealth. Much of the promised boost in green employment turns out to be in non-productive (but costly) positions that raise costs for consumers.

Myth 3: Green jobs forecasts are reliable. The forecasts for green employment optimistically predict an employment boom, which is welcome news. Unfortunately, the forecasts, which are sometimes amazingly detailed, are unreliable because they are based on questionable estimates by interest groups of tiny base numbers in employment, extrapolation of growth rates from those small base numbers, and a pervasive, biased, and highly selective optimism about which technologies will improve. Moreover, the estimates use a technique (input-output analysis) that is inappropriate to the conditions of technological change presumed by the green jobs literature itself. This yields seemingly precise estimates that give the illusion of scientific reliability to numbers that are simply the result of the assumptions made to begin the analysis.

Myth 4: Green jobs promote employment growth. Green jobs estimates promise greatly expanded (and pleasant and well-paid) employment. This promise is false. The green jobs model is built on promoting inefficient use of labor, favoring technologies because they employ large numbers rather than because they make use of labor efficiently. In a competitive market, factors of production, including labor, earn a return based on productivity. By focusing on low labor productivity jobs, the green jobs literature dooms employees to low wages in a shrinking economy. Economic growth cannot be ordered by Congress or by the U.N. Interference in the economy by restricting successful technologies in favor of speculative technologies favored by special interests will generate stagnation.

Myth 5: The world economy can be remade based on local production and reduced consumption without dramatically decreasing human welfare. The green jobs literature rejects the benefits of trade, ignores opportunity costs, and fails to include consumer surplus in welfare calculations to promote its vision. This is a recipe for an economic disaster, not an ecotopia. The twentieth century saw many experiments in creating societies that did not engage in trade and did not value personal welfare. The economic and human disasters that resulted should have conclusively settled the question of whether nations can withdraw into autarky. The global integration of wind turbine production, for example, illustrates that even green technology is not immune from economic reality.

Myth 6: Mandates are a substitute for markets. Green jobs proponents assume that they can reorder society by mandating preferred technologies. But the responses to mandates are not the same as the responses to market incentives. There is powerful evidence that market incentives induce the resource conservation that green jobs advocates purport to desire. The cost of energy is a major incentive to redesign production processes and products to use less energy. People do not want energy; they want the benefits of energy. Those who can deliver more desired goods and services by reducing the energy cost of production will be rewarded. There is little evidence of successful command and control regimes accomplishing conservation.

Myth 7: Wishing for technological progress is sufficient. The preferred technologies in the green jobs literature face significant problems in scaling up to the levels proposed. These problems are documented in readily available technical literatures, but resolutely ignored in the green jobs reports. At the same time, existing technologies that fail to meet the green jobs proponents’ political criteria are simply rejected out of hand. This selective technological optimism/pessimism is not a sufficient basis for remaking society to fit the dream of planners, politicians, patricians, or plutocrats who want others to live lives they think other people should be forced to lead.

To attempt to transform modern society on the scale proposed by even the most modest bits of the green jobs literature, such as the Conference of Mayors report, is an effort of staggering complexity and scale. To do so based on the combination of wishful thinking and bad economics embodied in the green jobs literature would be the height of irresponsibility. We have no doubt that there will be significant opportunities to develop new energy sources, new industries, and new jobs in the future. Just as has been true for all of human history thus far, we are equally confident that a market-based discovery process will do a far better job of developing those energy sources, industries, and jobs than could a series of mandates based on imperfect information.

Icecap Note: In support of the authors, we have found:

The economy in every country that has moved down an extreme green path has been hurt. Renewables are unreliable, as the wind doesn’t always blow and the sun doesn’t always shine. And don’t believe the claims that millions of green jobs would result. In Spain, every green job created cost $774,000 in subsidies and resulted in a loss of 2.2 real jobs. Only 1 in 10 green jobs was permanent. Industry left, and unemployment in Spain rose to 27.5%.

Many households in the countries that have gone green are said to be in “energy poverty” (25% UK, 15% Germany). Their energy costs are up to 3 times higher than in the U.S. In winter, the elderly are said to be forced to “choose between heating and eating”, and there are life-threatening blackouts. See here how electricity prices have reached a new high in Germany this week. And an end to the rising price spiral remains elusive, experts warn.

“Wholesale prices for electricity could continue to rise,” Die Welt reports. Large power producers such as RWE, warn that future plant closures due to the transition to green energies and the phasing out of the country’s nuclear power plants will “lead to a shortage” (brownouts and blackouts).

Die Welt ends its article: “The largest block on the electricity bill, however, are taxes, levies and allocations, which account for more than half of the total price.” One major price driver is the mandatory, exorbitantly high green energy feed-in tariffs that grid operators are forced to pay.

Hudson Litchfield News September 20, 2019

Joseph D’Aleo, CCM , AMS Fellow

WEATHER

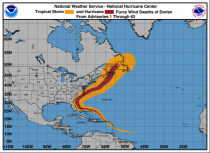

Dorian was a classic hurricane.

Its hurricane winds savaged the northernmost Bahamas as a CAT5 storm, but then as a weakening hurricane it skimmed the southeast coast and southeast New England before pounding the Canadian Maritimes, often the graveyard for tropical and winter storms.

Nothing is new in weather. Great Colonial hurricanes in the northeast with storm surges up to 20 feet occurred in 1635 and 1675. In the Caribbean, the Great Hurricane of 1780 killed an estimated 27,500 people while ravaging the islands of the eastern Caribbean with sustained winds estimated to top 200 mph. It was one of three hurricanes that year with death tolls greater than 1000.

The late 1880s and 1890s were very active. 1893 had at least 10 hurricanes. Of those, 5 became major hurricanes. Two of the hurricanes caused over two thousand (2000) deaths in the United States; at the time, the season was the deadliest in U.S. history. The great Galveston hurricane in 1900 killed as many as 12,000 people as its storm surge flooded the island.

Hurricanes recurve north as soon as the opportunity presents itself. Like the ocean currents and non-tropical storms, these storms help move heat north from the tropics, where there is a net surplus of heat, to northern latitudes, where there are deficits. If this exchange did not happen, the northern areas would continue to get colder and tropical regions warmer. Sometimes the storms stall or loop if a blocking high pressure prevents their escape north. Often this happens over the open ocean. In Dorian’s case, sadly, the stall occurred over the Grand Bahama and Great Abaco islands with catastrophic results.

It was the 10th strongest Atlantic storm since 1900. The strongest, also a Labor Day storm, hit the Florida Keys in 1935.

Hurricane and major hurricane landfall trends have been down since the late 1800s. We had a record stretch of almost 12 years without a major landfalling hurricane before Harvey and Irma in 2017 and Michael last year. Dorian made its first U.S. landfall in North Carolina but was no longer a major storm.

We have passed the peak of the hurricane season (September 10) and the chances of a major landfall are declining quickly. If no further hurricane or major hurricane makes landfall, this decade would be the second quietest decade for hurricanes (behind the 1970s) and second quietest for major hurricanes (behind the 1860s).

Should we worry here in New England? Yes, we should increase the awareness of the real threats and ways to protect your home and family.

Thanks to Hudson Cable TV, we have been given the chance to produce a series on the great hurricanes of the past called “Preparing for the Inevitable” with Joe Bastardi, his dad Matt and son Garrett, meteorologist Herb Stevens and storm chasing meteorologist Ron Moore (who last week was in Dorian’s outer bands).

We are very concerned about the inevitable return of a storm like the CAT3 Hurricane of ‘38, which felled 2 billion trees in the northeast as it traveled at 47 mph on a southeast to northwest path through New England. Matt Bastardi was in that hurricane as a boy in Providence, RI. He and I both survived Hurricane Carol in 1954. Parts I and II were meteorological reviews of the great storms. Part III, with FLASH’s Leslie Chapman-Henderson, provided recommendations about how you should prepare here for the eventual return of a big one that could damage your home and keep us in the dark for many weeks even as winter comes on. Part IV has been added, where we discuss and show how we can prepare for a storm like that. The same preparations also apply to the ice storms we get that can bring down trees and put us in the dark for many days. The shows cycle on cable but can be found here anytime.

SHOW 1: here

SHOW 2: here

SHOW 3: here

SHOW 4: here

CLIMATE

Do we have an existential threat due to climate change, as the seven-hour CNN climate change scareathon and the candidates now barnstorming the state are claiming? The answer is an absolute NO. In the 1970s we were told that because of population growth, climate stress (then cold), insufficient energy and crop failures, within a decade hundreds of millions would die, tens of millions in the U.S., and that it was too late to stop. Even as each dire forecast failed, the scares continued, with the date just pushed forward - 2000, 2020, and now 2030. BTW, they quietly removed snow and ice covered signs at Glacier National Park this season that said, “Warning: glaciers will be gone by 2020.”

We put together a team of scientific experts, looked at the 12 most commonly reported claims, and found them all unfounded.

Instead, as energy production increased and prosperity improved, global extreme weather related losses as a function of GDP declined 50% over the last 3 decades, and here in the U.S. there was a 98% decline in extreme weather deaths in the 20th century.

In the U.S., with low cost energy, lowered taxes and reduced unnecessary regulations, we now have the lowest unemployment for the nation, for blacks, Hispanics, women and young people in decades or in history, and for the first time in a long time, significant wage increases! Here in NH, we have the lowest unemployment in the nation. The U.S. is energy independent, a goal long thought unachievable. Our air and water are the cleanest in our lifetimes, well below the tough standards we put in place decades ago.

The real existential threat would come from radical environmentalism and the prescribed remedies. The scare is not based on fact but is politically driven, all about big government and control over every aspect of your life. They assume, as Jonathan Gruber of MIT, an Obama advisor on health care, opined, that most people are ‘stupid’ and will believe what you say, especially if the media provides an echo chamber. Rep. Alexandria Ocasio-Cortez’s chief of staff recently admitted that the Green New Deal was not conceived as an effort to deal with climate change, but instead as a “how-do-you-change-the-entire-economy thing,” nothing more than a thinly veiled socialist takeover of the U.S. economy.

“The interesting thing about the Green New Deal is it wasn’t originally a climate thing at all,” Saikat Chakrabarti said in May. He was echoing what the UN climate chief and a UN IPCC Lead Author said: that it was our best chance to change the economic system (to centralized control) and redistribute wealth (socialism).